Index of Languages

FORTRAN (~1953)

LISP (1958-)

ALGOL (1958)

COBOL (1959)

SIMULA (1964-1967)

PASCAL (1968/70)

ML (1973)

The C Programming Language (1972)

ADA (~1978/79 ->)

C++ (1979)

SmallTalk (1971-80)

Objective-C

SQL

Perl

Erlang

Python

PHP

Haskell

Java

JavaScript (JS)

TypeScript

OCaml

Scala

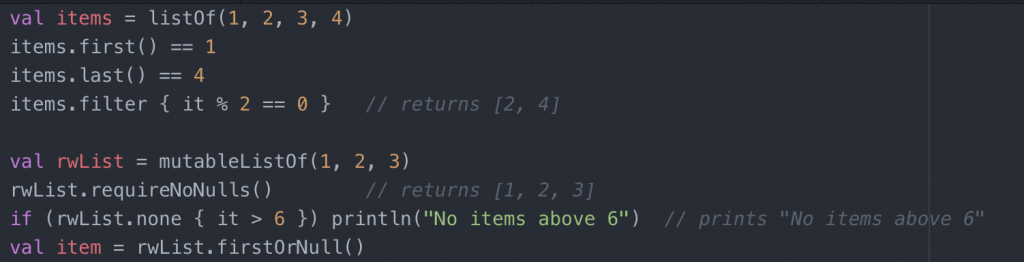

Kotlin

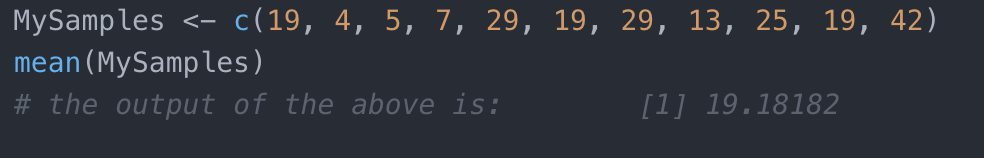

The “R” Programming Language

C#

Visual Basic .NET (~2001/2)

F#

Swift

There have been many programming languages over the years, often developed by dedicated, passionate, and indeed very geeky individuals whose passion has been for elegant abstractions of the ones and zeros upon which standard computers run.

Here are their origin stories.

Preamble

Thinking about the history of programming languages, we must travel back to the precursors of modern languages, such as the Jacquard loom of the early 1800s, which was able to weave patterns based on cards inserted in the machine (essentially pre-programmed). Beyond that, we can look at the story of Charles Babbage’s Analytical Engine in the 1830s and 1840s as another key point in the history of computer science.

Within this timeline, we often come across the story of Ada Lovelace, a woman credited with writing the “first computer program” for Babbage’s Analytical Engine. Whilst this is an oversimplification of the true story, it’s a story often cited, and indeed it’s where the Ada programming language gets its name (we’ll learn more about this important but little-known language later, and its more modern variant Ada 95).

A visit to the Computer History Museum in Mountain View, California will likely give the reader a better understanding of how the story of computer hardware developed with the computer “program” over time (Ref#: A). As they remind us: “Thousands of programming languages have been invented, and hundreds are still in use. Some are general-purpose, while others are designed for particular classes of applications. [Yet] Few new languages are truly new; most have been influenced by others that came before” (https://www.computerhistory.org/revolution/the-art-of-programming/9/357).

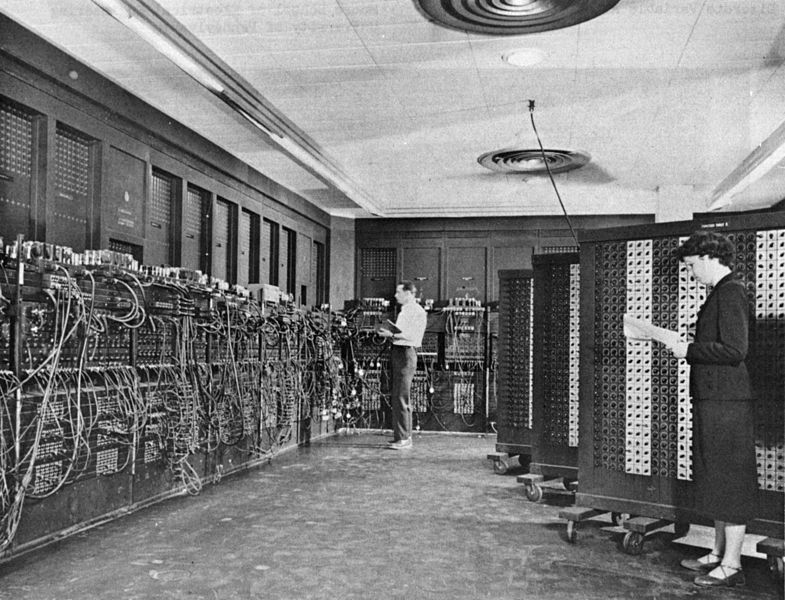

In the 1940s John von Neumann led a team that built computers featuring the use of stored programs, as well as a central processor.

One of the machines of this era was ENIAC, which had to be programmed using patch cords. The involvement of von Neumann led to a new binary-based machine that could store information, where the ENIAC originally could not.

In the 1940s machine code was used directly as the sole means of programming computers. However, because this was seen as tedious and error-prone, it wasn’t long before the idea of abstracting on top of it emerged.

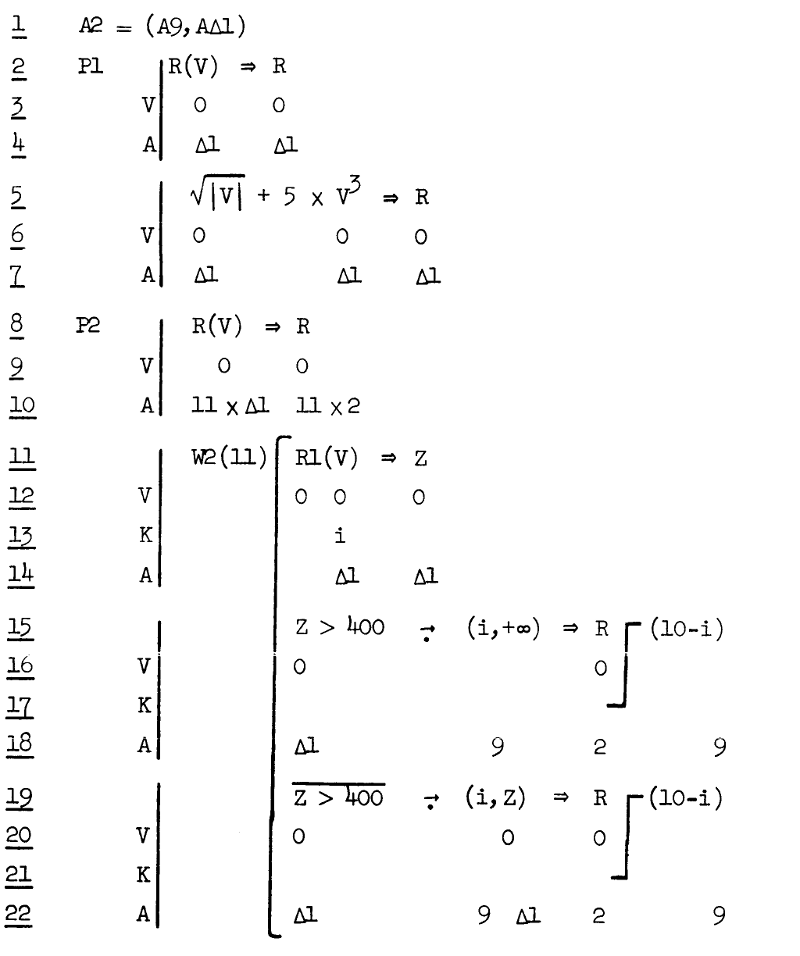

If we talk about high-level programming languages (HLLs), then an early example of one of these was Plankalkül (which means plan calculus). This was developed by a gentleman called Konrad Zuse between the years of 1942 and 1945 roughly alongside his development of what has been called the first “working digital computer”(Ref#: B). This early attempt to develop what we would now call a programming language did not end up with practical uses at the time, nevertheless, it was remarkable how many “standard features of today’s programming languages” Plankalkül had (Ref#: C).

Plankalkül

Another early higher-level language was Short Code. Developed by John Mauchly in 1949 and originally known as Brief Code, it was used with the UNIVAC I computer after William Schmitt made a version for it in 1950.

Following on from Mauchly’s work (and also working on the UNIVAC), Richard K. Ridgway and Grace Hopper developed the A-0 System in 1951/52. It has been called the “first compiler system”, although it was more of a loader/linker than a modern compiler.

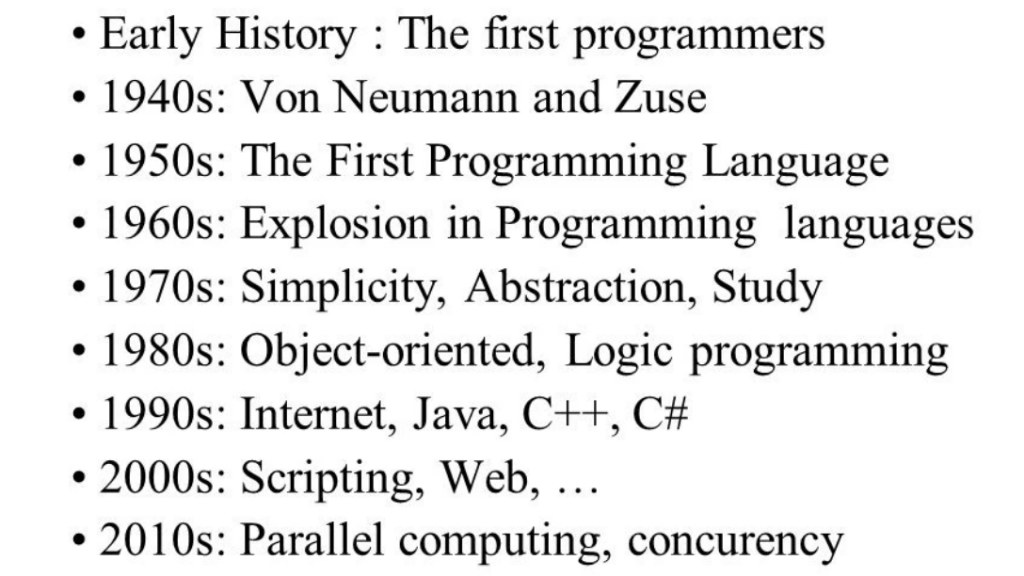

In general, we have seen the following themes emerge across the decades:

(source: https://slideplayer.com/slide/9262894)

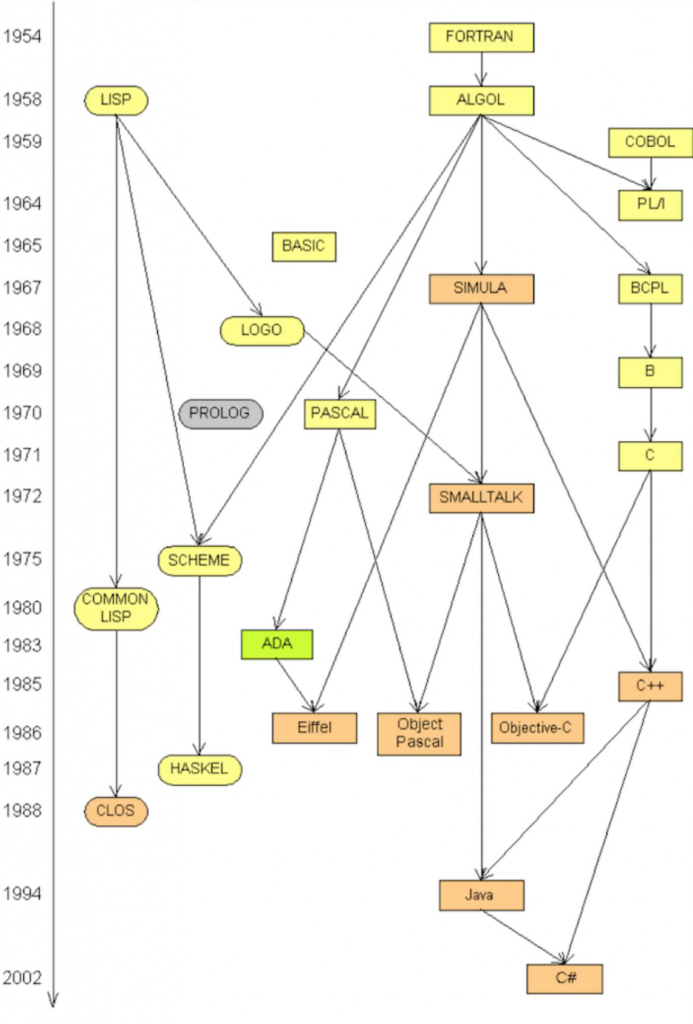

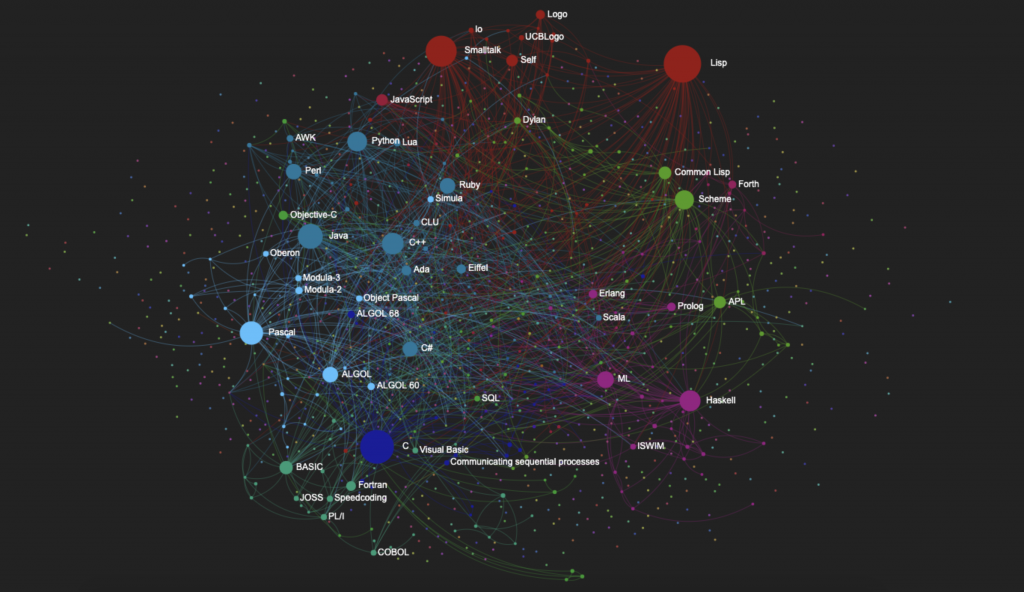

A Visualization Of A Graph DB of Connections Between Languages

source

We’ve summarized the historical context, so let’s move on now to the stories of our main programming languages.


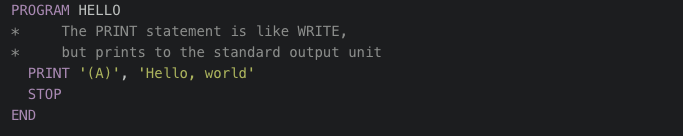

FORTRAN (~1953)

Fortran (or FORTRAN, from Formula Translation) is a general-purpose, imperative programming language that is especially suited to numeric computation and scientific computing.

It was a time of great change and technological advancements, with the world still recovering from the devastating effects of the Second World War. The year was 1953, and music was still in quite a familiar war-time style with popular songs like ‘A Sunday Kind of Love’ by The Harptones and ‘Have You Heard?’ by Joni James. It was also the year when Queen Elizabeth II, who was for many years the beloved reigning monarch of Britain, was crowned Queen following the passing of her father, King George VI. The end of the Korean War was also announced, and the presidency of the United States was handed over to Dwight D. Eisenhower (a 5-star general who had been responsible for planning and executing the successful D-Day invasion of Normandy).

The idea behind this language was to make it much easier for people to translate things like mathematical formulae into something machines understand, in a way that was intuitive and readable. FORTRAN is considered to be one of the first HLLs (High-Level Languages) to achieve widespread adoption. This means it was one of the first languages to abstract away the low-level operations of the CPU, allowing programmers to deal more conceptually with the algorithms they are writing.

FORTRAN is Procedural

The 1950s were a time of rapid technological advancements and computers were no exception. With their increasing popularity, computer experts sought ways to make these machines easier to use and more accessible to the general public. This led to the development of procedural programming.

Procedural programming involved breaking down complex computations into smaller, more manageable steps called procedures or functions. These procedures could be invoked at any point during the execution of a program and even by other procedures. This approach made writing code much simpler and more efficient.

As a result, several new programming languages emerged, including Fortran, ALGOL, COBOL, and BASIC. Fortran, with its focus on numerical computations and scientific computing, quickly gained popularity and recognition. Its user-friendly and intuitive approach was ahead of its time and continues to inspire programming languages to this day.

Amidst this exciting era, FORTRAN emerged as a truly remarkable development. Brought to life by the experts at IBM, FORTRAN was designed specifically for scientific and engineering applications. Its powerful capabilities and intuitive design soon established it as a dominant force in this field of programming.

For over half a century, FORTRAN has remained at the forefront of some of the most computationally intensive areas of study. From numerical weather prediction to finite element analysis, computational fluid dynamics, and beyond, FORTRAN has proven itself as a vital tool for scientific discovery.

And as the race for greater computing power continues, FORTRAN remains a popular choice for high-performance computing. It is the language behind programs that rank and measure the world’s fastest supercomputers, cementing its place in the annals of computing history.

Unlike a language like ALGOL 60, early FORTRAN was not designed to handle recursive functions, including the highly intricate Ackermann Function.

The Ackermann Function is an important example of a recursive function and is used in computer science to demonstrate the capabilities and limitations of programming languages. It is named after Wilhelm Ackermann, a German mathematician, and is a well-known example of a function that is computable but not primitive recursive, so it cannot be expressed with fixed-count loops alone.

The Ackermann Function is defined by a small set of rules whose recursive calls nest ever more deeply. Its values and recursion depth grow so quickly that even small inputs exceed practical limits, and a language without recursion, such as early FORTRAN, cannot express the definition directly.

As a result, early FORTRAN’s inability to handle the Ackermann Function directly highlights its limitations with recursive functions, and serves as a reminder to computer scientists and programmers to consider the strengths and weaknesses of a given programming language when choosing a suitable tool for the task at hand.

(Ref#: B).
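To make the recursion concrete, here is a minimal sketch of the common two-argument form of the Ackermann function in Python. It is not taken from the referenced source; the function name `ackermann` is our own, and only very small inputs are practical because the recursion depth grows so quickly.

```python
def ackermann(m: int, n: int) -> int:
    """Two-argument Ackermann function, written as a direct recursion."""
    if m == 0:
        return n + 1
    if n == 0:
        return ackermann(m - 1, 1)
    # The second argument is itself the result of a recursive call,
    # which is what stops this being rewritten as simple counted loops.
    return ackermann(m - 1, ackermann(m, n - 1))


if __name__ == "__main__":
    # Keep the inputs tiny: the values and call depth explode for m >= 4.
    for m in range(4):
        print(m, [ackermann(m, n) for n in range(4)])
```

Each call needs its own copy of `m` and `n` while the nested calls run, which is exactly what a language without per-call stack frames cannot provide.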

Key People

John Backus

Born in 1924, the same year the company took the name IBM, Backus was a man with a really interesting life story. Backus eventually moved to New York and then began working on key projects for IBM.

The Team

The FORTRAN team was put together gradually, beginning with Irving (“Irv”) Ziller and John Backus. A short time later they were joined by Harlan Herrick, and then they hired Robert “Bob” Nelson as a technical typist. Sheldon Best from MIT came along next, as did Roy Nutt from United Aircraft. They were followed by Peter Sheridan, David Sayre, Lois Haibt, Richard “Dick” Goldberg and others.

Richard Goldberg, Irv Ziller and John Backus

Irv Ziller

“In late 1953, Backus wrote a memo to his boss that outlined the design of a programming language for IBM’s new computer, the 704. This computer had a built-in scaling factor, also called a floating point, and an indexer, which significantly reduced operating time. However, the inefficient computer programs of the time would hamper the 704’s performance, and Backus wanted to design not only a better language but one that would be easier and faster for programmers to use when working with the machine. IBM approved Backus’s proposal, and he hired a team of programmers and mathematicians to work with him” (SOURCE: E).

Chronologically, he began working on IBM’s SSEC computer in 1949 and then worked in the famous Watson Lab from 1950 to 1952. After that, he and his team published their work on FORTRAN in 1954.

Language Features

Fortran had what it called DO loops (a bit like for-loops), which implemented iteration using a counter and could be nested. However, early versions did not support user-level recursion, because each subprogram had only a single stack frame. That made the language a bad fit for something innately recursive like the Ackermann function, whereas it could work for something primitive recursive like a Fibonacci calculation, which can be rewritten with loops (Ref#: B).
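As an illustration of the loop-only style described above, the following Python sketch computes Fibonacci numbers with a single counted loop and builds a small table with nested counted loops. It is a hedged analogy rather than a translation of any FORTRAN program; the names `fibonacci` and `multiplication_table` are made up for this example.

```python
def fibonacci(n):
    """Return the n-th Fibonacci number (F(0) = 0, F(1) = 1) without recursion."""
    previous, current = 0, 1
    for _ in range(n):  # a counted loop, in the spirit of a DO loop
        previous, current = current, previous + current
    return previous


def multiplication_table(size):
    """Build a size-by-size table using two nested counted loops."""
    table = []
    for i in range(1, size + 1):      # outer loop
        row = []
        for j in range(1, size + 1):  # inner loop, nested in the outer one
            row.append(i * j)
        table.append(row)
    return table


if __name__ == "__main__":
    print([fibonacci(n) for n in range(11)])
    print(multiplication_table(3))
```

Because the loop only ever needs the two most recent values, no call stack is required, which is why this kind of calculation suited early FORTRAN.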

There are actually a bunch of different versions of FORTRAN which have evolved over time:

FORTRAN II appeared in 1958 and looked like this:

SOURCE: Wikipedia

Versions of FORTRAN

There have been several versions of the programming language.

“In 1958, IBM released a revised version of the language, named FORTRAN II. It provided support for procedural programming by introducing statements which allowed programmers to create subroutines and functions, thereby encouraging the re-use of code.

FORTRAN’s growing popularity led many computer manufacturers to implement versions of it for their own machines. Each manufacturer added its own customisations, making it impossible to guarantee that a program written for one type of machine would compile and run on a different type.

IBM responded by removing all machine-dependent features from its version of the language. The result, released in 1961, was called FORTRAN IV” (Ref#: I).

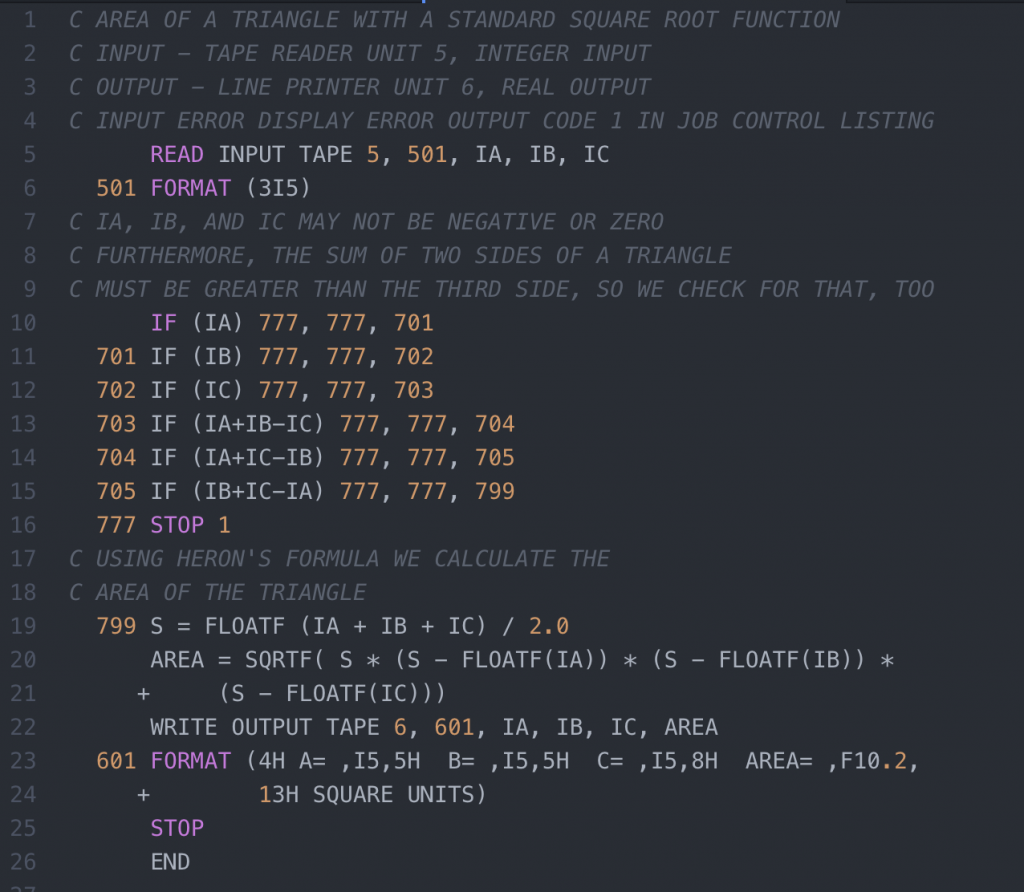

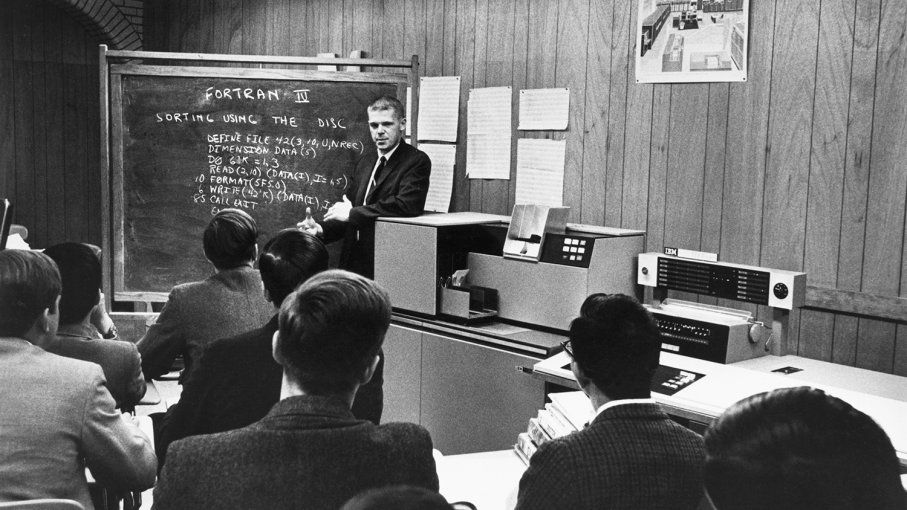

FORTRAN IV

    C AREA OF A TRIANGLE - HERON'S FORMULA
    C INPUT - CARD READER UNIT 5, INTEGER INPUT, ONE BLANK CARD FOR END-OF-DATA
    C OUTPUT - LINE PRINTER UNIT 6, REAL OUTPUT
    C INPUT ERROR DISPLAY ERROR MESSAGE ON OUTPUT
      501 FORMAT(3I5)
      601 FORMAT(4H A= ,I5,5H  B= ,I5,5H  C= ,I5,8H  AREA= ,F10.2,
         $13H SQUARE UNITS)
      602 FORMAT(10HNORMAL END)
      603 FORMAT(23HINPUT ERROR, ZERO VALUE)
          INTEGER A,B,C
       10 READ(5,501) A,B,C
          IF(A.EQ.0 .AND. B.EQ.0 .AND. C.EQ.0) GO TO 50
          IF(A.EQ.0 .OR.  B.EQ.0 .OR.  C.EQ.0) GO TO 90
          S = (A + B + C) / 2.0
          AREA = SQRT( S * (S - A) * (S - B) * (S - C) )
          WRITE(6,601) A,B,C,AREA
          GO TO 10
       50 WRITE(6,602)
          STOP
       90 WRITE(6,603)
          STOP
          END

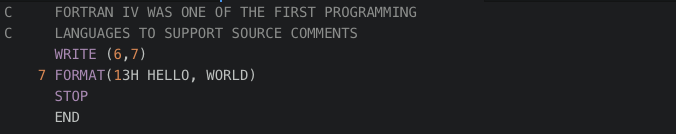

FORTRAN 66

The example above shows the use of the “Hollerith constant”, the way in which early versions of the language, including FORTRAN 66, dealt with character strings by essentially converting them to a numerical representation; “13H” means that the 13 characters after it will be treated as a “character constant”.

“The space immediately following the 13H is a carriage control character, telling the I/O system to advance to a new line on the output. A zero in this position advances two lines (double space), a 1 advances to the top of a new page and + character will not advance to a new line, allowing overprinting” (SOURCE: F).
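The counting rule for Hollerith constants, and the carriage-control meanings quoted above, can be sketched in Python as follows. This is only an illustration of the notation, not part of any FORTRAN compiler; the names `read_hollerith` and `CARRIAGE_CONTROL` are hypothetical.

```python
# Meaning of the first output character, as described in the quotation above.
CARRIAGE_CONTROL = {
    " ": "advance to a new line",
    "0": "advance two lines (double space)",
    "1": "advance to the top of a new page",
    "+": "do not advance (overprint)",
}


def read_hollerith(text, start=0):
    """Read one Hollerith constant of the form nH<n characters>.

    Returns the characters and the index just past the constant.
    """
    h_position = text.index("H", start)
    count = int(text[start:h_position])  # the digits before the H
    begin = h_position + 1
    end = begin + count
    if end > len(text):
        raise ValueError("constant is shorter than its declared length")
    return text[begin:end], end


if __name__ == "__main__":
    characters, _ = read_hollerith("13H SQUARE UNITS)")
    print(repr(characters))
    print(CARRIAGE_CONTROL[characters[0]])
```

Note that the characters are taken purely by count, so spaces inside the constant are significant even though FORTRAN otherwise ignores them.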

FORTRAN 77

FORTRAN 90 (95)

This added many of the features of more modern programming languages, including support for recursion, pointers, CASE statement, parameter type checking, and many other changes.

SOURCE: F

Conclusion

FORTRAN was made for mathematicians and scientists, and to this day it enjoys a certain level of popularity in these areas. It is still widely used by physicists, and “in a survey of Fortran users at the 2014 Supercomputing Convention, 100% of respondents said they thought they would still be using Fortran in five years” (Ref#: J). In fact, products with support for a version of FORTRAN were still being released in 2019, such as “Intel Parallel Studio XE 2019” (Ref#: G). The same is true of the “OpenMP” API, which is used to explicitly direct multi-threaded parallelism on shared-memory machines, a topic that belongs to the wider field of Parallel Computing (Ref#: H).

References

A: https://www.youtube.com/watch?v=KohboWwrsXg

B: https://www.youtube.com/watch?v=HXNhEYqFo0o

C: https://chet-aero.com/downloads/fortran-77-resources/

D: https://www.youtube.com/watch?v=KNEYtu48iyU

E: https://www.thocp.net/biographies/backus_john.htm

F: https://en.wikibooks.org/wiki/Fortran/Fortran_examples#FORTRAN_66_(also_FORTRAN_IV)

G: https://www.youtube.com/watch?v=IdwBeNeIR9o&t=

H: https://computing.llnl.gov/tutorials/openMP/

I: https://www.obliquity.com/computer/fortran/history.html

J: http://moreisdifferent.com/2015/07/16/why-physicsts-still-use-fortran/

K: https://www.youtube.com/watch?v=uFQ3sajIdaM

LISP (1958 -)

LISP is a symbolic language that can be challenging to learn. It is used mostly in academic circles, with its main applications in artificial intelligence.

“Lisp (historically LISP) is a family of computer programming languages with a long history and a distinctive, fully parenthesized prefix notation. Originally specified in 1958, Lisp is the second-oldest high-level programming language in widespread use today. Only Fortran is older, by one year. Lisp has changed since its early days, and many dialects have existed over its history. Today, the best known general-purpose Lisp dialects are Clojure, Common Lisp, and Scheme” (Wikipedia).

Lisp is often considered declarative. In fact, however, Lisp is multi-paradigm: it is procedural as well as functional.

“””

Declarative programming is often defined as any style of programming that is not imperative. A number of other common definitions attempt to define it by simply contrasting it with imperative programming. For example:

- A high-level program that describes what a computation should do.

- Any programming language that lacks side effects (or more specifically, is referentially transparent)

- A language with a clear correspondence to mathematical logic.

These definitions overlap substantially.

Declarative programming contrasts with imperative and procedural programming. Declarative programming is a non-imperative style of programming in which programs describe their desired results without explicitly listing commands or steps that must be performed. Functional and logical programming languages are characterized by a declarative programming style. In logical programming languages, programs consist of logical statements, and the program executes by searching for proofs of the statements.

In a pure functional language, such as Haskell, all functions are without side effects, and state changes are only represented as functions that transform the state, which is explicitly represented as a first class object in the program. Although pure functional languages are non-imperative, they often provide a facility for describing the effect of a function as a series of steps. Other functional languages, such as Lisp, OCaml and Erlang, support a mixture of procedural and functional programming.

“”” (Wikipedia).
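The contrast between the imperative and declarative styles described above can be sketched in Python, a language that, like Lisp, supports both. The task and the function names here are invented for illustration.

```python
def sum_even_squares_imperative(values):
    """Imperative style: spell out each step and update state as we go."""
    total = 0
    for value in values:
        if value % 2 == 0:
            total += value * value
    return total


def sum_even_squares_declarative(values):
    """Declarative style: describe the result wanted, with no explicit state."""
    return sum(value * value for value in values if value % 2 == 0)


if __name__ == "__main__":
    numbers = [1, 2, 3, 4, 5, 6]
    print(sum_even_squares_imperative(numbers))
    print(sum_even_squares_declarative(numbers))
```

Both functions compute the same value; the difference lies in whether the program lists the commands to perform or states what the result should be.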

Key People

John McCarthy

McCarthy was a key figure in early AI who coined the term “Artificial Intelligence”.

He “developed the Lisp programming language family, significantly influenced the design of the ALGOL programming language, popularized timesharing, and was very influential in the early development of AI” (Wikipedia).

Links to Smalltalk and the History of OOP

“Lisp deeply influenced Alan Kay, the leader of the research team that developed Smalltalk at Xerox PARC; and in turn Lisp was influenced by Smalltalk, with later dialects adopting object-oriented programming features (inheritance classes, encapsulating instances, message passing, etc.) in the 1970s. The Flavors object system introduced the concept of multiple inheritance and the mixin. The Common Lisp Object System provides multiple inheritance, multimethods with multiple dispatch, and first-class generic functions, yielding a flexible and powerful form of dynamic dispatch.”(Wikipedia).

Example Code

Hello World

    ;;; HWorld.lsp
    ;;; ================================================== ;;;
    ;;; =========== HELLO WORLD SIMULATION ============== ;;;
    ;;; ================================================== ;;;
    ;;; This function simply returns the string Hello World that is in quotes.

    (DEFUN HELLO ()
      "HELLO WORLD"
    )

Average of Numbers

    ;;; Create a 3x3 array and print it
    (setf x (make-array '(3 3)
                        :initial-contents '((1 2 3) (4 5 6) (7 8 9))))
    (write x)

    ;;; Print the element at row i, column j
    (defun afisare (i j)
      (print (aref x i j)))

    (afisare 0 1)

    ;;; A 2x2 array displaced onto x, starting at offset 5
    (setq a (make-array '(2 2) :displaced-to x :displaced-index-offset 5))
    (write a)

    ;;; Store some properties on the symbol STUDENT and print its property list
    (setf (get 'student 'age) 43)
    (setf (get 'student 'cnp) '123456789)
    (setf (get 'student 'anstudiu) 3)
    (setf (get 'student 'ioan) 1)
    (terpri)
    (write (symbol-plist 'student))

    ;;; Read three numbers from the user and print their average
    (defun average ()
      (terpri)
      (princ "Enter number 1: ")
      (setq x (read))
      (write x)
      (princ "Enter number 2: ")
      (setq y (read))
      (write y)
      (princ "Enter number 3: ")
      (setq z (read))
      (write z)
      (setq average (/ (+ x y z) 3))
      (princ "Average: ")
      (write average))

    (average)

Using Lambdas (aka Anonymous Functions)

    ;;; Use LAMBDA to create anonymous functions. Functions always return the
    ;;; value of the last expression. The exact printable representation of a
    ;;; function varies between implementations.
    (lambda () "Hello World") ; => #<FUNCTION ...> (implementation-dependent)

    ;;; Use FUNCALL to call anonymous functions
    (princ (funcall (lambda () "Hello World")))   ; => "Hello World"
    (terpri)
    (princ (funcall #'+ 2 3 5))                   ; => 10
    (terpri)

    ;;; A call to FUNCALL is also implied when the lambda expression is the CAR of
    ;;; an unquoted list
    ((lambda () "Hello World"))                   ; => "Hello World"
    ((lambda (val) val) "Hello World")            ; => "Hello World"

    ;;; FUNCALL is used when the arguments are known beforehand. Otherwise, use APPLY
    (apply #'+ '(1 2 3))                          ; => 6
    (apply (lambda () "Hello World") nil)         ; => "Hello World"

    ;;; To name a function, use DEFUN
    (defun hello-world () "Hello World")
    (princ (hello-world))                         ; => "Hello World"
    (terpri)

    ;;; The () in the definition above is the list of arguments
    (defun hello (name) (format nil "Hello ~A" name))
    (hello "Steve")                               ; => "Hello Steve"

    ;;; Functions can have optional arguments; they default to NIL
    (defun hello (name &optional from)
      (if from
          (format t "Hello ~A, from ~A" name from)
          (format t "Hello ~A" name)))
    (hello "Dave" "Code Warriers")   ; => Hello Dave, from Code Warriers

    ;;; The default values can also be specified
    (defun hello (name &optional (from "The Whole World!"))
      (format nil "Hello ~A, from ~A" name from))
    (terpri)
    (princ (hello "Steve"))          ; => Hello Steve, from The Whole World!
    (terpri)
    (princ (hello "Steve" "the Aliens!")) ; => Hello Steve, from the Aliens!

    ;;; Functions also have keyword arguments to allow non-positional arguments
    (defun generalized-greeter (name &key (from "the World") (honorific "Mrs"))
      (format t "Hello ~A ~A, from ~A" honorific name from))
    (terpri)
    (generalized-greeter "Jilly")    ; => Hello Mrs Jilly, from the World
    (terpri)
    (generalized-greeter "Jim"
                         :from "the Friendly Squirrel you met last Fall!"
                         :honorific "Mr")
    ; => Hello Mr Jim, from the Friendly Squirrel you met last Fall!

Source: Ref#B

Conditional Logic

    ;;; Conditionals
    (princ (if t                  ; test expression
               "this is true"     ; then expression
               "this is false"))  ; else expression
    ; => this is true
    (terpri)

    ;;; In conditionals, all non-NIL values are treated as true
    (princ (if (member 'John '(John Paul Ringo))
               'Yes
               'No))
    ; => YES
    (terpri)

    (princ (if (member 'Pete '(John Paul Ringo))
               'Yes
               'No))
    ; => NO
    (terpri)

References

A: https://www.tutorialspoint.com/lisp_programming_examples/

B: https://learnxinyminutes.com/docs/common-lisp/

C: http://groups.umd.umich.edu/cis/course.des/cis400/lisp/crapcode.txt

ALGOL (1958)

Introduction

ALGOL is a high-level language with an algebraic style. It is no longer in current use, but it influenced languages like Ada and Pascal. Its main use was in mathematical work.

The year was 1958: the year NASA was created (drawing on the work of Operation Paperclip and other scientists) and the year of the Brussels World’s Fair (pictured below). In the popular charts that year were the Everly Brothers with All I Have To Do Is Dream. The setting was ETH Zurich (a well-known STEM university) in Switzerland, and the occasion was the Zurich ACM-GAMM Conference. There, in a joint project of the ACM (Association for Computing Machinery) and the GAMM (Association for Applied Mathematics and Mechanics), the proposed International Algebraic Language (IAL) was approved.

Notable features of IAL included compound statements; IAL was “intended to provide convenient and concise means for expressing virtually all procedures of numerical computation while employing relatively few syntactical rules and statement types”(Ref#: A).

“The first ALGOL 58 compiler was completed by the end of 1958 by Friedrich L. Bauer, Hermann Bottenbruch, Heinz Rutishauser, and Klaus Samelson for the Z22 computer. Bauer et al. had been working on compiler technology for the Sequentielle Formelübersetzung (i.e. sequential formula translation) in the previous years”(Source: Wikipedia).

Key People

John (J. W.) Backus, a programming language designer at IBM.

Peter Naur, a Danish computer scientist (“dataologist”) and Turing Award winner known for the Backus–Naur form (along with John Backus); he contributed to the creation of the ALGOL 60 programming language.

There are three flavors of ALGOL which take their names from their years of instantiation:

ALGOL 58 (IAL)

“ALGOL 58, originally known as IAL, is one of the family of ALGOL computer programming languages. It was an early compromise design soon superseded by ALGOL 60.”

ALGOL 60

“ALGOL 60 […] followed on from ALGOL 58 which had introduced code blocks and the begin and end pairs for delimiting them. ALGOL 60 was the first language implementing nested function definitions with lexical scope. It gave rise to many other programming languages, including CPL, Simula, BCPL, B, Pascal and C.”
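To show what “nested function definitions with lexical scope” means in practice, here is a small Python sketch (Python inherited this idea from the ALGOL family). The function names are invented for illustration and the example is not drawn from any ALGOL 60 program.

```python
def mean_and_deviations(values):
    """Return the mean of values and each value's deviation from it."""
    total = sum(values)
    count = len(values)

    def deviation(x):
        # 'total' and 'count' are not parameters of this inner function.
        # They are found in the enclosing function where it was defined,
        # which is what lexical scope means.
        return x - total / count

    return total / count, [deviation(v) for v in values]


if __name__ == "__main__":
    print(mean_and_deviations([1, 2, 3]))
```

The inner function exists only inside the outer one, just as a block or procedure declared inside another ALGOL 60 procedure is visible only there.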

Code Example (ICT 1900 series variety)

    'PROGRAM' (HELLO)
    'BEGIN'
    'COMMENT' OPEN QUOTE IS '(', CLOSE IS ')', PRINTABLE SPACE HAS TO
              BE WRITTEN AS % BECAUSE SPACES ARE IGNORED;
        WRITE TEXT('('HELLO%WORLD')');
    'END'
    'FINISH'

For a variety of reasons, ALGOL 60 was never widely used, and other languages of the time were generally preferred.

ALGOL 68

ALGOL 68 was designed to be a successor to the ALGOL 60 programming language, with the goal of expanding its scope of application and providing a more precisely defined syntax and semantics. The language’s definition, however, was highly complex, spanning hundreds of pages with unconventional terminology, making compiler implementation a challenge. As a result, ALGOL 68 was said to have “no implementations and no users”, which was only partially accurate. Despite this, the language found limited use in certain niche markets, such as in the United Kingdom where it was widely used on ICL machines, and as a tool for teaching computer science. However, outside of these communities, its usage was relatively limited.

ICL (International Computers Limited) was a UK-based computer company that used ALGOL 68 as one of the primary programming languages on its mainframe computers in the 1970s and 1980s. ICL machines were widely used in government, academic, and research institutions in the UK and ALGOL 68 was well-suited to the high-level mathematical and scientific computing tasks that were common on these systems. The language’s support for complex data structures and its high-level, expressive syntax made it popular among ICL users for a variety of applications, including scientific simulations, data analysis, and numerical computations.

The use of ALGOL 68 on ICL machines was significant because it helped establish the language as a viable option for scientific computing, despite its reputation for being difficult to implement. The combination of ICL’s hardware and the capabilities of ALGOL 68 made the company a leader in the field of high-performance computing in the UK during this time period. Although the popularity of ALGOL 68 eventually declined with the rise of more widespread programming languages such as C and FORTRAN, its use on ICL machines helped to establish the language as an important part of the history of computer science.

(Wikipedia/ChatGPT).

JOVIAL

JOVIAL, short for Jules’ Own Version of the International Algebraic Language (named after its lead developer, Jules Schwartz), is a high-level programming language that was developed by a team at System Development Corporation (SDC) in the late 1950s. JOVIAL was designed specifically for applications in military aircraft control systems and was commissioned by the United States Air Force (USAF). The language was developed with the goal of being a robust and reliable tool for composing software for the electronics of military aircraft, making it well-suited for use in mission-critical scenarios.

JOVIAL was developed as a “high-order” programming language, meaning that it was designed to allow for the creation of high-level abstractions and was optimized for readability and maintainability. This made JOVIAL a popular choice for aircraft control systems, where the software needed to be both safe and dependable. JOVIAL systems were used for many years in actual air traffic control systems, making them a critical component of the infrastructure that supported military aviation.

In this way, JOVIAL can be compared to the modern programming language ADA, which was also designed for use in critical systems and is widely used in safety-critical industries such as aerospace, defense, and transportation. The development of JOVIAL was a major step forward in the evolution of high-level programming languages and its continued use in aircraft control systems is a testament to its robustness and reliability.

References

A: http://www.softwarepreservation.org/projects/ALGOL/paper/Backus-Syntax_and_Semantics_of_Proposed_IAL.pdf

B: https://dl.acm.org/citation.cfm?doid=367236.367262

C: http://slideplayer.com/slide/6370501/

D: https://en.wikipedia.org/wiki/JOVIAL

COBOL (1959)

In April 1959, Mary K. Hawes, who had identified the need for a common business language in accounting, called a meeting of representatives from academia, computer users, and manufacturers at the University of Pennsylvania. The meeting focused on strategies to get agreement on a common business computer language.

“Mary Hawes, a Burroughs Corporation programmer, called in March 1959 for computer users and manufacturers to create a new computer language—one that could run on different brands of computers and perform accounting tasks such as payroll calculations, inventory control, and records of credits and debits” (Ref#: A).

Representatives present that day included Grace Hopper, Jean Sammet, and Saul Gorn.

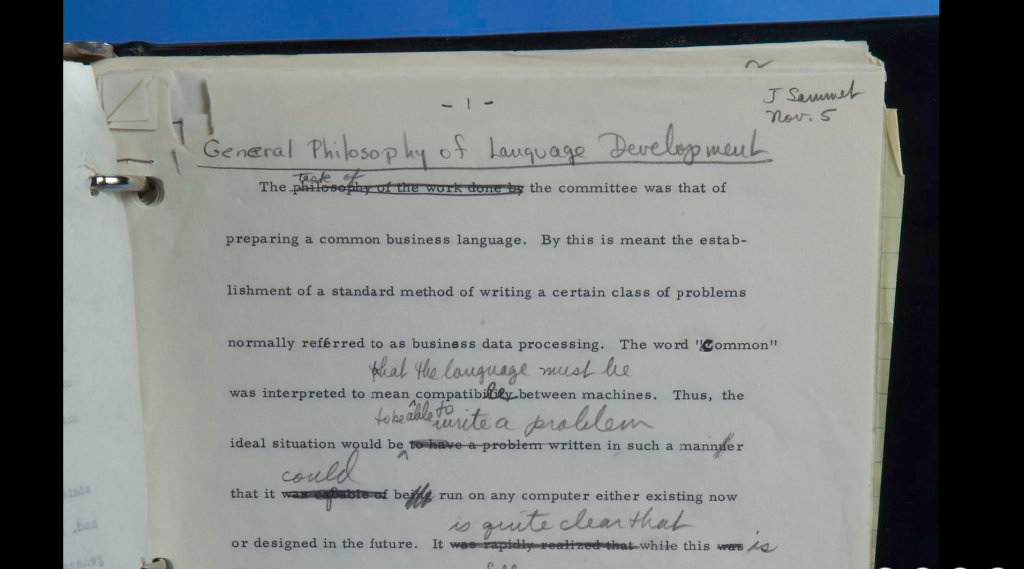

What they eventually came up with was COBOL, or the “COmmon Business-Oriented Language”. The first draft of COBOL was produced in November 1959.

“During 1959 the first plans for the computer language COBOL emerged as a result of meetings of several committees and subcommittees of programmers from American business and government. This heavily annotated typescript was prepared during a special meeting of the language subcommittee of the Short-Range Committee held in New York City in November. COBOL programs would actually run the following summer, and the same program was successfully tested on computers of two different manufacturers in December 1960.”

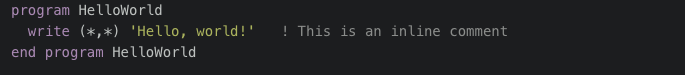

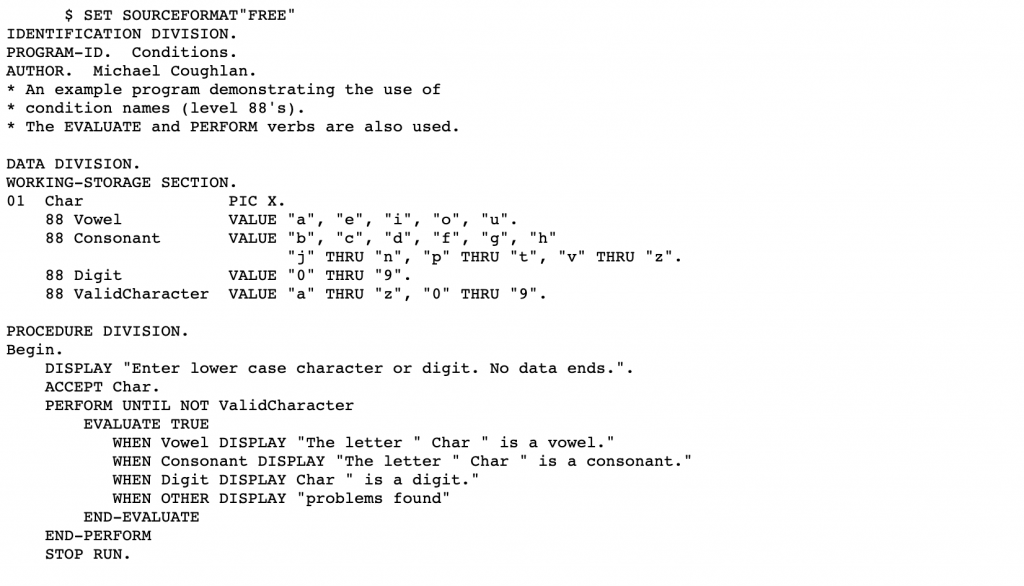

COBOL Example Code

SOURCE: B

References

A: http://americanhistory.si.edu/cobol/introduction

B: http://www.csis.ul.ie/cobol/examples/Conditn/Conditions.htm


Simula (1964-67)

In tracing the evolution of Object-Oriented Programming (OOP) languages, many believe Simula is an important milestone. Developed in the 1960s at the Norwegian Computing Center in Oslo by Ole-Johan Dahl and Kristen Nygaard, Simula encompasses two iterations: Simula I and Simula 67. Syntactically, it stands as a comprehensive superset of ALGOL 60, while also bearing influences from Simscript.

Originally conceived as a tool for discrete event simulation, Simula underwent subsequent expansion and reimplementation to evolve into a robust general-purpose programming language. Simula 67 notably introduced several groundbreaking concepts to the programming world. These include objects, classes, inheritance, subclasses, virtual procedures, coroutines, discrete event simulation mechanisms, and the incorporation of garbage collection. Additionally, it pioneered various forms of subtyping beyond merely inheriting subclasses.

As its name suggests, Simula was designed for doing simulations, and the needs of that domain provided the framework for many of the features of object-oriented languages today.

Simula’s versatility is manifest in its diverse applications. It has been employed in simulating VLSI designs, process modeling, protocol development, algorithmic studies, as well as in typesetting, computer graphics, and educational contexts. Despite its foundational significance, the influence of Simula is occasionally overlooked. Nevertheless, its concepts have been reimagined and incorporated into subsequent languages like C++, Object Pascal, Java, and C#. Esteemed computer scientists, including Bjarne Stroustrup, the progenitor of C++, and James Gosling, the architect of Java, have publicly recognized Simula’s seminal influence on their work (Wikipedia).

Example Code

    Begin
       Integer Procedure GCD(M, N); Integer M, N;
       Begin
          While M<>N do
             If M<N then N := N - M else M := M - N;
          GCD := M
       End of GCD;

       Integer A, B;
       OutText("Enter an integer number: "); OutImage; A := InInt;
       OutText("Enter an integer number: "); OutImage; B := InInt;
       OutText("Greatest Common Divisor of your numbers is ");
       OutInt(GCD(A,B), 4); OutImage;
    End of Program;

Classes

“A central new concept in SIMULA 67 is the “object”. An object is a self-contained program (block instance), having its own local data and actions defined by a “class declaration”. The class declaration defines a program (data and action) pattern, and objects conforming to that pattern are said to “belong to the same class””(Ref#: B).

```simula
class order(number); integer number;
begin
   integer number of units, arrival date;
   real processing time;
end;
! We can make a new instance of order thus: "new order(103)";
```

Simula introduced the concept of classes, which many later object-oriented programming languages went on to adopt.

```simula
Class Rectangle (Width, Height); Real Width, Height;
                           ! Class with two parameters;
 Begin
    Real Area, Perimeter;  ! Attributes;

    Procedure Update;      ! Methods (Can be Virtual);
    Begin
      Area := Width * Height;
      Perimeter := 2*(Width + Height)
    End of Update;

    Boolean Procedure IsSquare;
      IsSquare := Width=Height;

    Update;                ! Life of rectangle started at creation;
    OutText("Rectangle created: "); OutFix(Width,2,6);
    OutFix(Height,2,6); OutImage
 End of Rectangle;
```

References

A: http://staff.um.edu.mt/jskl1/talk.html

B: http://simula67.at.ifi.uio.no/Archive/Intro-simula/intro.pdf

C: http://staff.um.edu.mt/jskl1/talk.html

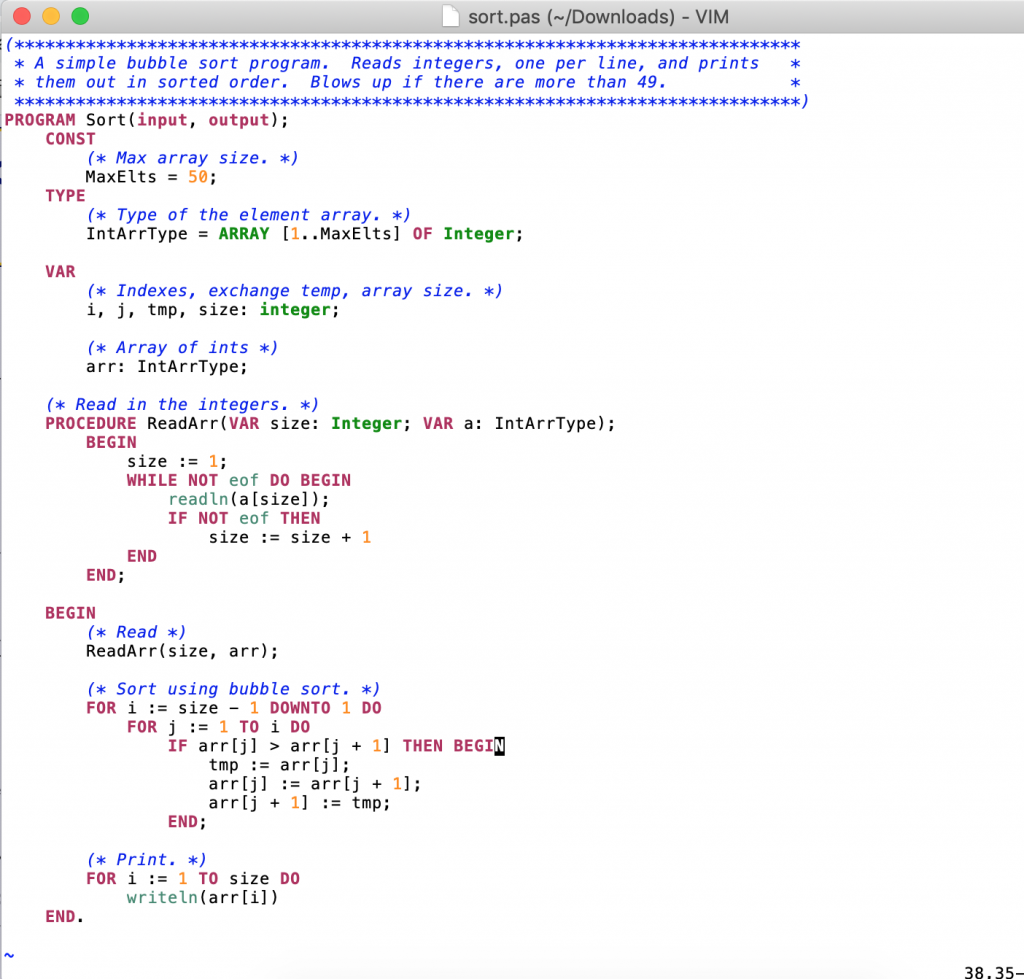

Pascal (1968/70)

“Pascal is an imperative and procedural programming language, which Niklaus Wirth designed in 1968–69 and published in 1970, as a small, efficient language intended to encourage good programming practices using structured programming and data structuring. It is named in honor of the French mathematician, philosopher and physicist Blaise Pascal.”

Key People

Niklaus Wirth

“From 1963 to 1967 he served as assistant professor of Computer Science at Stanford University and again at the University of Zurich. Then in 1968 he became Professor of Informatics at ETH Zürich, taking two one-year sabbaticals at Xerox PARC in California (1976–1977 and 1984–1985). Wirth retired in 1999” (Wikipedia).

Example Pascal Code


Prolog (~1972)

“Prolog is a logic programming language associated with artificial intelligence and computational linguistics.

Prolog has its roots in first-order logic, a formal logic, and unlike many other programming languages, Prolog is intended primarily as a declarative programming language: the program logic is expressed in terms of relations, represented as facts and rules. A computation is initiated by running a query over these relations.

Key People

The language was first conceived by Alain Colmerauer and his group in Marseille, France, in the early 1970s and the first Prolog system was developed in 1972 by Colmerauer with Philippe Roussel.

Prolog was one of the first logic programming languages, and remains the most popular among such languages today, with several free and commercial implementations available. The language has been used for theorem proving, expert systems, term rewriting, type systems, and automated planning, as well as its original intended field of use, natural language processing. Modern Prolog environments support the creation of graphical user interfaces, as well as administrative and networked applications.

Prolog is well-suited for specific tasks that benefit from rule-based logical queries such as searching databases, voice control systems, and filling templates”.

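```prolog
% partition(Pivot, List, Smaller, Larger): split List around Pivot.
partition(_, [], [], []).
partition(P, [H|T], [H|L], R) :-
    H =< P, partition(P, T, L, R).   % H goes to the "smaller or equal" side
partition(P, [H|T], L, [H|R]) :-
    H > P, partition(P, T, L, R).    % H goes to the "larger" side

quicksort([], []).
quicksort([P|T], Sorted) :-
    partition(P, T, L, R),
    quicksort(L, L1),
    quicksort(R, R1),
    append(L1, [P|R1], Sorted).
```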
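The idea of facts, rules and queries described above can be hard to picture if you have only used imperative languages, so here is a rough sketch of it in Python. It is only an analogy: a real Prolog engine works by unification and backtracking, whereas this sketch simply searches a set of tuples. The `PARENT` facts and the `grandparent` rule are hypothetical examples, not taken from any Prolog library.

```python
# Facts: a hypothetical parent(Parent, Child) relation.
PARENT = {
    ("alice", "bob"),
    ("bob", "carol"),
    ("bob", "dave"),
}

def grandparent(x=None, z=None):
    """Rule: grandparent(X, Z) :- parent(X, Y), parent(Y, Z).

    An argument left as None behaves like an unbound Prolog variable,
    so the same rule answers several different questions.
    """
    answers = []
    for (a, y1) in sorted(PARENT):
        for (y2, c) in sorted(PARENT):
            if y1 == y2 and x in (None, a) and z in (None, c):
                answers.append((a, c))
    return answers

print(grandparent("alice"))    # who are alice's grandchildren?
print(grandparent(z="dave"))   # who is dave's grandparent?
```

Note that nothing in `grandparent` says which argument is the input and which is the output. That is the declarative flavour Prolog is known for: you state the relation, and the query decides what gets computed.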

The C Programming Language (1972)

“C is a general-purpose, imperative computer programming language, supporting structured programming, lexical variable scope and recursion, while a static type system prevents many unintended operations”(Wikipedia). “The C programming language was devised in the early 1970s as a system implementation language for the nascent Unix operating system. Derived from the typeless language BCPL, it evolved a type structure; created on a tiny machine as a tool to improve a meager programming environment, it has become one of the dominant languages of today” (Ref#: G).

The C language is considered low-level since it allows (and indeed sometimes requires) manual memory management. There’s no built-in garbage-collection mechanism; on the plus side, being closer to machine code (the language that a given processor speaks) allows for better optimizations. It’s used for systems and general programming because it is typically fast and efficient.

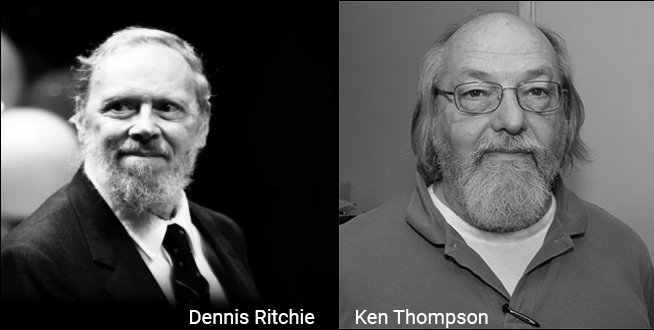

Key People

Dennis Ritchie

Ritchie worked for Bell Labs (AT&T) in the 1960s where, along with several other Bell Labs employees, he took part in a project called Multics, a time-sharing operating system developed jointly with MIT and General Electric. (A C compiler was later developed for Multics, primarily to facilitate the porting of third-party software to it.) In 1969 AT&T (Bell Labs) withdrew from the project because it could not produce an economically useful system.

Brian Kernighan worked with Ritchie and Ken Thompson at Bell Labs. Following on from their work there, Brian Kernighan and Dennis Ritchie wrote “The C Programming Language” (1st edition), which became the classic text of the language (Ref#: A), although Brian clarified that he was not the creator of the C language but rather a fan of it.

Features

- Portable

- Powerful

- Fast and Efficient

- Modularity

- Platform Dependent

- Use of pointers

- Middle Level

- Rich Library

- Extensible

- Structure

- Supports recursion

It is a robust language with a rich set of built-in functions and operators that can be used to write complex programs. C combines the capabilities of an assembly language with some of the features of a high-level language. Programs written in C tend to be efficient and fast, helped by its variety of data types and powerful operators. A C program is basically a collection of functions, supported by the C standard library, and we can also write our own functions and collect them into libraries of our own. C is among the most widely used languages in operating system and embedded system development today.

Links to The Unix Operating System

Unix was originally written in assembly language and was largely rewritten in C in 1973. Both Unix and the C programming language were developed at AT&T / Bell Labs. In fact, the Unix project was started in 1969 by Ken Thompson and Dennis Ritchie.

Code Examples

Hello World Example

```c
#include <stdio.h>

int main() {
    // printf() displays the string inside the quotation marks
    printf("Hello, World!");
    return 0;
}
```

Another Example

```c
// Find the ASCII Value of a given Character
#include <stdio.h>

int main() {
    char c;
    printf("Enter a character: ");

    // Reads character input from the user
    scanf("%c", &c);

    // %d displays the integer value of a character
    // %c displays the actual character
    printf("ASCII value of %c = %d", c, c);
    return 0;
}
```

References

A: https://www.codingunit.com/the-history-of-the-c-language

B: https://en.wikipedia.org/wiki/Multics

C: https://en.wikipedia.org/wiki/C_(programming_language)

D: https://en.wikipedia.org/wiki/Unix

E: https://www.studytonight.com/c/features-of-c.php

F: https://www.cs.cmu.edu/~guna/15-123S11/Lectures/Lecture01.pdf

G: https://www.jslint.com/chistory.html

H: https://www.programiz.com/c-programming/examples/

I: Brian Kernighan: UNIX, C, AWK, AMPL, and Go Programming | AI Podcast #109 with Lex Fridman [Internet Video]. Sourced from https://www.youtube.com/watch?v=O9upVbGSBFo on 20th July 2020.

ML (1973)

ML is a functional programming language developed by Robin Milner and others at the University of Edinburgh in Scotland. It was created as the metalanguage (hence the name) of the LCF theorem prover. Milner wanted a language in which a faulty proof could not be constructed by mistake, a guarantee that the untyped Lisp used for earlier tools could not give.

“ML (“Meta Language”) is a general-purpose functional programming language. It has roots in Lisp, and has been characterized as “Lisp with types”. ML is a statically-scoped functional programming language like Scheme. It is known for its use of the polymorphic Hindley–Milner type system, which automatically assigns the types of most expressions without requiring explicit type annotations, and ensures type safety – there is a formal proof that a well-typed ML program does not cause runtime type errors. ML provides pattern matching for function arguments, garbage collection, imperative programming, call-by-value and currying. It is used heavily in programming language research and is one of the few languages to be completely specified and verified using formal semantics. Its types and pattern matching make it well-suited and commonly used to operate on other formal languages, such as in compiler writing, automated theorem proving, and formal verification” (Ref#: A).

Aside on Hindley-Milner Type Inference

The “Hindley–Milner (HM) type system is a classical type system for the lambda calculus with parametric polymorphism. It is also known as Damas–Milner or Damas–Hindley–Milner. … Luis Damas contributed a close formal analysis and proof of the method in his PhD thesis” (Wikipedia).

“HM has been rediscovered many times by many people. Curry used it informally in the 1950’s (perhaps even the 1930’s). He wrote it up formally in 1967 (published 1969). Hindley discovered it independently in 1969; Morris in 1968; and Milner in 1978. In the realm of logic, similar ideas go back perhaps as far as Tarski in the 1920’s”(Ref#: B).

“Among HM’s more notable properties are its completeness and its ability to infer the most general type of a given program without programmer-supplied type annotations or other hints. Algorithm W is an efficient type inference method that performs in almost linear time with respect to the size of the source, making it practically useful to type large programs. HM is preferably used for functional languages. It was first implemented as part of the type system of the programming language ML. Since then, HM has been extended in various ways, most notably with type class constraints like those in Haskell.” (Wikipedia)

The algorithm in question looks to infer value types based on use. It formalizes the intuition that a type can be deduced by looking at the functionality it supports.

Algorithm W is the type inference algorithm usually associated with the Hindley-Milner (HM) type system. It deduces the most general type of each expression without programmer-supplied type annotations or hints, and in practice it runs in close to linear time with respect to the size of the source code, which makes it a practical way of typing large programs. This is a large part of why HM suits functional programming, where a strong emphasis is placed on type correctness and type inference.

Robin Milner described Algorithm W in the late 1970s. It is considered one of the seminal contributions to the field of type theory and type inference in computer science.
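To make the idea of inferring types from use more concrete, here is a compact sketch of HM-style inference in Python. It is an illustration in the spirit of Algorithm W rather than a faithful transcription of Milner's presentation, and everything about the encoding is an assumption of this sketch: expressions and types are plain tuples, and the names `infer`, `unify`, `show`, `type_of` and `ENV` are hypothetical.

```python
from itertools import count

# Encoding (an assumption of this sketch): base types are the strings "int" and
# "bool", a type variable is ("var", n), a function type is ("->", arg, result).
_ids = count()

def fresh():
    return ("var", next(_ids))

def is_var(t):
    return isinstance(t, tuple) and t[0] == "var"

def resolve(t, subst):
    """Apply the current substitution all the way through a type."""
    if is_var(t):
        return resolve(subst[t], subst) if t in subst else t
    if isinstance(t, tuple):
        return ("->", resolve(t[1], subst), resolve(t[2], subst))
    return t

def free_vars(t, subst):
    t = resolve(t, subst)
    if is_var(t):
        return {t}
    if isinstance(t, tuple):
        return free_vars(t[1], subst) | free_vars(t[2], subst)
    return set()

def unify(a, b, subst):
    """Extend subst so that a and b become equal, or raise TypeError."""
    a, b = resolve(a, subst), resolve(b, subst)
    if a == b:
        return
    if is_var(a):
        if a in free_vars(b, subst):
            raise TypeError("infinite type")      # occurs check
        subst[a] = b
    elif is_var(b):
        unify(b, a, subst)
    elif isinstance(a, tuple) and isinstance(b, tuple):
        unify(a[1], b[1], subst)
        unify(a[2], b[2], subst)
    else:
        raise TypeError(f"cannot unify {a} with {b}")

def rename(t, mapping):
    if is_var(t):
        return mapping.get(t, t)
    if isinstance(t, tuple):
        return ("->", rename(t[1], mapping), rename(t[2], mapping))
    return t

def infer(expr, env, subst):
    """Expressions: int/bool literals, names (str), ("lam", x, body),
    ("app", f, arg) and ("let", x, value, body)."""
    if isinstance(expr, bool):
        return "bool"
    if isinstance(expr, int):
        return "int"
    if isinstance(expr, str):
        bound, t = env[expr]                      # instantiate the type scheme
        return rename(resolve(t, subst), {v: fresh() for v in bound})
    if expr[0] == "lam":
        _, param, body = expr
        arg = fresh()
        return ("->", arg, infer(body, {**env, param: (set(), arg)}, subst))
    if expr[0] == "app":
        _, fn, arg = expr
        fn_t, arg_t, result = infer(fn, env, subst), infer(arg, env, subst), fresh()
        unify(fn_t, ("->", arg_t, result), subst)
        return result
    if expr[0] == "let":
        _, name, value, body = expr
        value_t = infer(value, env, subst)
        env_vars = set()
        for bound, t in env.values():
            env_vars |= free_vars(t, subst) - bound
        scheme = (free_vars(value_t, subst) - env_vars, value_t)   # generalize
        return infer(body, {**env, name: scheme}, subst)
    raise ValueError(f"unknown expression: {expr!r}")

def show(t, subst):
    names = {}
    def go(t):
        if is_var(t):
            return names.setdefault(t, "'" + chr(ord("a") + len(names)))
        if isinstance(t, tuple):
            left = f"({go(t[1])})" if isinstance(t[1], tuple) and not is_var(t[1]) else go(t[1])
            return f"{left} -> {go(t[2])}"
        return t
    return go(resolve(t, subst))

def type_of(expr, env=None):
    subst = {}
    return show(infer(expr, dict(env or {}), subst), subst)

ENV = {"succ": (set(), ("->", "int", "int"))}
identity = ("lam", "x", "x")
print(type_of(identity))   # 'a -> 'a
print(type_of(("let", "id", identity, ("app", ("app", "id", "succ"), ("app", "id", 1))), ENV))   # int
```

The second example is the interesting one: `id` is used once on a function and once on an integer. That only type-checks because the `let` case generalizes the type of `id` before the body is examined, which is the "let-polymorphism" that distinguishes HM from simpler inference schemes.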

```sml
fun fac (0 : int) : int = 1
  | fac (n : int) : int = n * fac (n - 1)
```

Standard ML

SML or Standard ML is a modern dialect of the ML language.

Here is an extended set of Standard ML Code:

```sml
(* Comments in Standard ML begin with (* and end with *).  Comments can be
   nested which means that all (* tags must end with a *) tag.  This comment,
   for example, contains two nested comments. *)

(* A Standard ML program consists of declarations, e.g. value declarations: *)
val rent = 1200
val phone_no = 5551337
val pi = 3.14159
val negative_number = ~15  (* Yeah, unary minus uses the 'tilde' symbol *)

(* Optionally, you can explicitly declare types. This is not necessary as
   ML will automatically figure out the types of your values. *)
val diameter = 7926 : int
val e = 2.718 : real
val name = "Bobby" : string

(* And just as importantly, functions: *)
fun is_large(x : int) = if x > 37 then true else false

(* Floating-point numbers are called "reals". *)
val tau = 2.0 * pi         (* You can multiply two reals *)
val twice_rent = 2 * rent  (* You can multiply two ints *)
(* val meh = 1.25 * 10 *)  (* But you can't multiply an int and a real *)
val yeh = 1.25 * (Real.fromInt 10) (* ...unless you explicitly convert
                                      one or the other *)

(* +, - and * are overloaded so they work for both int and real. *)
(* The same cannot be said for division which has separate operators: *)
val real_division = 14.0 / 4.0  (* gives 3.5 *)
val int_division  = 14 div 4    (* gives 3, rounding down *)
val int_remainder = 14 mod 4    (* gives 2, since 3*4 = 12 *)

(* ~ is actually sometimes a function (e.g. when put in front of variables) *)
val negative_rent = ~(rent)  (* Would also have worked if rent were a "real" *)

(* There are also booleans and boolean operators *)
val got_milk = true
val got_bread = false
val has_breakfast = got_milk andalso got_bread  (* 'andalso' is the operator *)
val has_something = got_milk orelse got_bread   (* 'orelse' is the operator *)
val is_sad = not(has_something)                 (* not is a function *)

(* Many values can be compared using equality operators: = and <> *)
val pays_same_rent = (rent = 1300)  (* false *)
val is_wrong_phone_no = (phone_no <> 5551337)  (* false *)

(* The operator <> is what most other languages call !=. *)
(* 'andalso' and 'orelse' are called && and || in many other languages. *)

(* Actually, most of the parentheses above are unnecessary.  Here are some
   different ways to say some of the things mentioned above: *)
fun is_large x = x > 37  (* The parens above were necessary because of ': int' *)
val is_sad = not has_something
val pays_same_rent = rent = 1300  (* Looks confusing, but works *)
val is_wrong_phone_no = phone_no <> 5551337
val negative_rent = ~rent  (* ~ rent (notice the space) would also work *)

(* Parentheses are mostly necessary when grouping things: *)
val some_answer = is_large (5 + 5)      (* Without parens, this would break! *)
(* val some_answer = is_large 5 + 5 *)  (* Read as: (is_large 5) + 5. Bad! *)

(* Besides booleans, ints and reals, Standard ML also has chars and strings: *)
val foo = "Hello, World!\n"  (* The \n is the escape sequence for linebreaks *)
val one_letter = #"a"        (* That funky syntax is just one character, a *)

val combined = "Hello " ^ "there, " ^ "fellow!\n"  (* Concatenate strings *)

val _ = print foo       (* You can print things. We are not interested in the *)
val _ = print combined  (* result of this computation, so we throw it away. *)
(* val _ = print one_letter *)  (* Only strings can be printed this way *)

val bar = [ #"H", #"e", #"l", #"l", #"o" ]  (* SML also has lists! *)
(* val _ = print bar *)  (* Lists are unfortunately not the same as strings *)

(* Fortunately they can be converted.  String is a library and implode and size
   are functions available in that library that take strings as argument. *)
val bob = String.implode bar          (* gives "Hello" *)
val bob_char_count = String.size bob  (* gives 5 *)
val _ = print (bob ^ "\n")            (* For good measure, add a linebreak *)

(* You can have lists of any kind *)
val numbers = [1, 3, 3, 7, 229, 230, 248]  (* : int list *)
val names = [ "Fred", "Jane", "Alice" ]    (* : string list *)

(* Even lists of lists of things *)
val groups = [ [ "Alice", "Bob" ],
               [ "Huey", "Dewey", "Louie" ],
               [ "Bonnie", "Clyde" ] ]     (* : string list list *)

val number_count = List.length numbers     (* gives 7 *)
```

More Examples Here: https://learnxinyminutes.com/docs/standard-ml/

References

A: https://en.wikipedia.org/wiki/ML_(programming_language)

B: https://www.cs.cornell.edu/courses/cs3110/2019sp/textbook/interp/inference.html

ADA (~1978/79 onwards)

ADA is a high-level language whose main use is in defense applications.

As the sun set on the late 1970s, the world was swept away by the “sweet” melodies of the Bee Gees’ hit song “How Deep is Your Love”. Meanwhile, in a small corner of the computer science world, a group of passionate engineers were deeply devoted to a different kind of love – the love of solving complex programming problems.

The United States Department of Defense had issued a challenge to the world, seeking a new language to tackle its legacy code issues and other programming difficulties. It was in this arena that the team of computer enthusiasts found their calling, and poured their hearts into creating a language that would rise to the top.

One of the competition entries caught the eye of the judges, a language that was elegant, robust, and designed specifically for mission-critical systems. And so, the love affair between ADA and the DOD began, a relationship that would endure for decades to come, as ADA became the programming language of choice for some of their key systems.

It had started with a group of dedicated computer scientists in France who had been busy at work, tasked with solving a critical challenge faced by the United States Department of Defense. The DOD, burdened with a vast array of over 450 programming languages, sought to streamline its system and find a single, stable and type-safe solution.

The call for a new programming language was answered by a team led by the brilliant French computer scientist Jean Ichbiah of CII Honeywell Bull, a company with roots dating back to 1931. Under contract with the DoD, Ichbiah and his team worked tirelessly to create a language that would meet the needs of the military, culminating in the proposed language, “Green”.

Their hard work paid off as “Green” emerged victorious in the DOD competition, and was eventually named Ada, in honor of Ada Lovelace, the pioneering “computer programmer” who lived in the 19th century.

Ada can be used for many of the same tasks that could be implemented in the likes of C or C++; however, it has one of the best type-safety systems available in a statically typed programming language, making it “safer”. In 1987, the DoD began to require the use of Ada for every software project where new code was going to make up more than 30% of the project, though exceptions to this rule were often granted. In 1997, the DoD Ada mandate was effectively removed as the DoD began to embrace more commercial off-the-shelf technology as opposed to always developing custom solutions in each use case.

Ada went on to be used in a number of safety-critical systems, including everything from Boeing jetliners to missile systems. The lack of “crashability” of programs written in Ada (helped by it being strongly typed) was naturally super important to clients who wanted to use it in these critical systems.

Key People

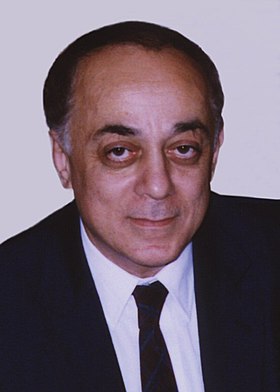

Jean Ichbiah (1940 – 2007) was French with Jewish origins having been descended from Greek and Turkish Jews from the area of Thessaloniki who had previously emigrated to France.

“Ichbiah’s team submitted a language design labeled “Green” to a competition to choose the United States Department of Defense’s embedded programming language. When Green was selected in 1978, he continued as chief designer of the language, now named “Ada”. In 1980, Ichbiah left CII-HB and founded the Alsys corporation in La Celle-Saint-Cloud, which continued language definition to standardize Ada 83, and later went into the Ada compiler business, also supplying special validated compiler systems to NASA, the US Army, and others. He later moved to the Waltham, Massachusetts subsidiary of Alsys” (Ref#: A).

Code Example (ADA 95)

```ada
-- This program will calculate the number of days old you are.
with Ada.Text_IO, Ada.Integer_Text_IO;
use Ada.Text_IO, Ada.Integer_Text_IO;

procedure Age is

   LOW_YEAR    : constant := 1880;
   MAX         : constant := 365.0 * (2100 - LOW_YEAR);
   type AGES is delta 1.0 range -MAX..MAX;
   Present_Age : AGES;
   package Fix_IO is new Ada.Text_IO.Fixed_IO(AGES);
   use Fix_IO;

   type DATE is
      record
         Month : INTEGER range 1..12;
         Day   : INTEGER range 1..31;
         Year  : INTEGER range LOW_YEAR..2100;
         Days  : AGES;
      end record;

   Today     : DATE;
   Birth_Day : DATE;

   procedure Get_Date(Date_To_Get : in out DATE) is
      Temp : INTEGER;
   begin
      Put(" month --> ");
      loop
         Get(Temp);
         if Temp in 1..12 then
            Date_To_Get.Month := Temp;
            exit;         -- month OK
         else
            Put_Line(" Month must be in the range of 1 to 12");
            Put(" ");
            Put(" month --> ");
         end if;
      end loop;

      Put(" ");
      Put(" day ----> ");
      loop
         Get(Temp);
         if Temp in 1..31 then
            Date_To_Get.Day := Temp;
            exit;         -- day OK
         else
            Put_Line(" Day must be in the range of 1 to 31");
            Put(" ");
            Put(" day ----> ");
         end if;
      end loop;

      Put(" ");
      Put(" year ---> ");
      loop
         Get(Temp);
         if Temp in LOW_YEAR..2100 then
            Date_To_Get.Year := Temp;
            exit;         -- year OK
         else
            Put_Line(" Year must be in the range of 1880 to 2100");
            Put(" ");
            Put(" year ---> ");
         end if;
      end loop;

      Date_To_Get.Days := 365 * AGES(Date_To_Get.Year - LOW_YEAR) +
                          AGES(31 * Date_To_Get.Month + Date_To_Get.Day);
   end Get_Date;

begin
   Put("Enter Today's date; ");
   Get_Date(Today);
   New_Line;

   Put("Enter your birthday;");
   Get_Date(Birth_Day);
   New_Line(2);

   Present_Age := Today.Days - Birth_Day.Days;
   if Present_Age < 0.0 then
      Put("You will be born in ");
      Present_Age := abs(Present_Age);
      Put(Present_Age, 6, 0, 0);
      Put_Line(" days.");
   elsif Present_Age = 0.0 then
      Put_Line("Happy birthday, you were just born today.");
   else
      Put("You are now ");
      Put(Present_Age, 6, 0, 0);
      Put_Line(" days old.");
   end if;

end Age;
```

Source: Ref C

Polymorphism in Ada

Prior to ADA 95, there were some aspects of object-oriented languages that Ada did not explicitly support out of the box, including inheritance and polymorphism, but it was still possible to implement these in the language by adding extra code (https://dl.acm.org/doi/abs/10.1145/142003.142005).

“The full power of object orientation is realized by polymorphism, class-wide programming and dynamic dispatching…” (Ref#: D).

“In 1995 facilities were added to Ada to easily support inheritance. Inheritance lets us define new types as extensions of existing types; these new types inherit all the operations of the types they extend.”


ADA 95 Example

```ada
package Figures is
   -- Package to demonstrate Object Orientation.

   type Point is
      record
         X, Y: Float;
      end record;

   type Figure is tagged
      record
         Start : Point;
      end record;

   function Area (F: Figure) return Float;
   function Perimeter (F:Figure) return Float;
   procedure Draw (F: Figure);

   type Circle is new Figure with
      record
         Radius: Float;
      end record;
   function Area (C: Circle) return Float;
   function Perimeter (C: Circle) return Float;
   procedure Draw (C: Circle);

   type Rectangle is new Figure with
      record
         Width: Float;
         Height: Float;
      end record;
   function Area (R: Rectangle) return Float;
   function Perimeter (R: Rectangle) return Float;
   procedure Draw (R: Rectangle);

   type Square is new Rectangle with null record;

end Figures;
```
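The package above is only a specification: it declares the types and operations but not their bodies. To show what the type extension and dynamic dispatching buy you, here is a rough Python analogue of the same hierarchy. It is not Ada, and the formulas in the method bodies are my own assumptions (the specification does not say how `Area` or `Perimeter` are computed); `total_area` is a hypothetical helper standing in for a class-wide operation.

```python
import math
from dataclasses import dataclass

@dataclass
class Point:
    x: float
    y: float

@dataclass
class Figure:
    """Plays the role of the tagged root type."""
    start: Point

    def area(self) -> float:
        return 0.0          # assumed default; the Ada spec gives no body

    def perimeter(self) -> float:
        return 0.0

@dataclass
class Circle(Figure):
    """Like `type Circle is new Figure with record ...`: adds one field."""
    radius: float

    def area(self) -> float:
        return math.pi * self.radius ** 2

    def perimeter(self) -> float:
        return 2 * math.pi * self.radius

@dataclass
class Rectangle(Figure):
    width: float
    height: float

    def area(self) -> float:
        return self.width * self.height

    def perimeter(self) -> float:
        return 2 * (self.width + self.height)

class Square(Rectangle):
    """Like `new Rectangle with null record`: no new fields, operations inherited."""

def total_area(figures):
    # Each call to area() is dispatched on the run-time type of the object.
    return sum(figure.area() for figure in figures)

origin = Point(0.0, 0.0)
shapes = [Rectangle(origin, 2.0, 3.0), Square(origin, 2.0, 2.0), Circle(origin, 1.0)]
print(total_area(shapes))
```

`total_area` never mentions `Circle`, `Rectangle` or `Square`, yet it works for all of them and for any figure type added later. That is the point of the quote about class-wide programming and dynamic dispatching.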

Source: https://dwheeler.com/lovelace/s7s2.htm

References

A: https://www.revolvy.com/page/Jean-Ichbiah

B: http://acqnotes.com/subcategory/software-management/page/4

C: https://perso.telecom-paristech.fr/pautet/Ada95/chap16.htm

D: https://en.wikibooks.org/wiki/Ada_Programming/Object_Orientation


C++ / “C Plus Plus” (1979)

To explore C++ we must travel back, back further indeed through some sort of Psychedelic wormhole, floating then all the way back to the 1970s. Amongst the youth of the day the fashion was polyester, bright colors, flares, tight-fitting pants, and platform shoes.

Then to 1979, Britain’s first female prime minister Margaret Thatcher was elected, cult TV series Tales of the Unexpected began to show, and in the British pop charts was everything from the Village People (YMCA), to The Police (as the culture that became the 1980s took hold).

Meanwhile, a dude called Bjarne Stroustrup, a Danish computer scientist who had just finished his Ph.D. at Cambridge, was up to something at Bell Labs, something he called “C with Classes”. In fact, he had come up with what was to turn into C++ as we know it today. Stroustrup recalls that “C++ was designed to provide Simula’s facilities for program organization together with C’s efficiency and flexibility for systems programming.” (http://www.stroustrup.com/hopl2.pdf).

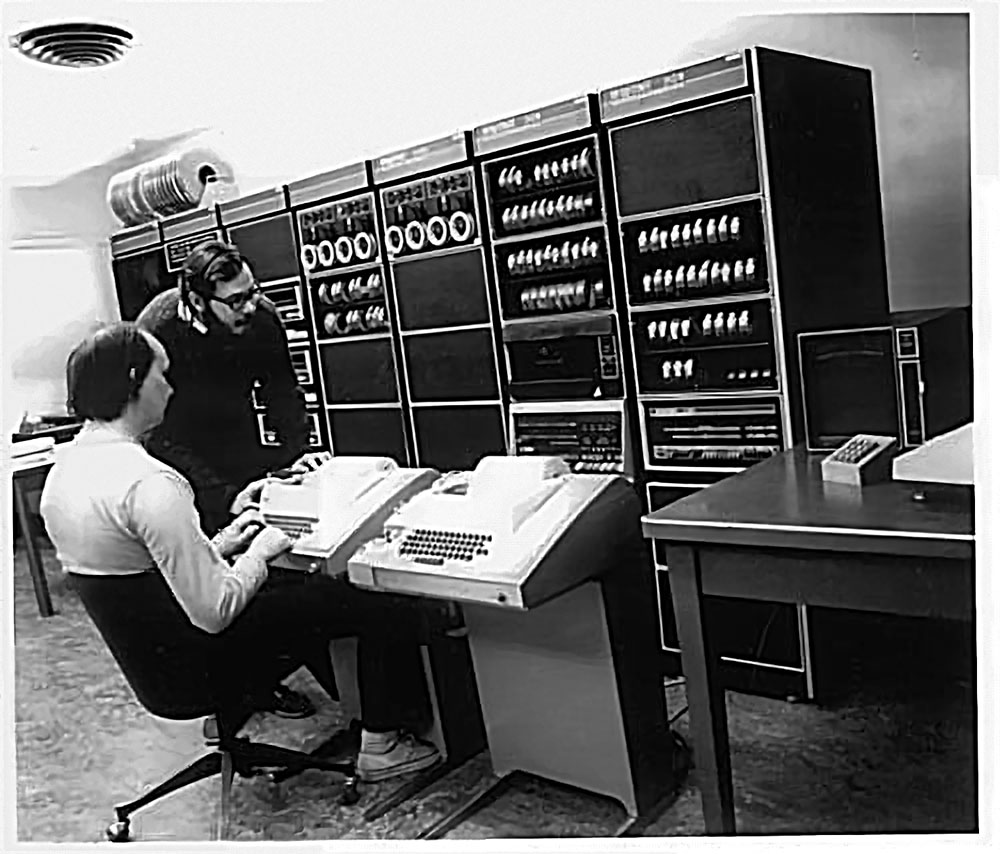

The image shows what was probably the computer Stroustrup used at the time (he is not in the picture): an IBM 370/165, which was installed at the Cambridge University Computing Service in 1971. He calls this an “IBM 360/165” in a document he published (C++Ref#A).

The genesis of the ideas that were to lead to the creation of C++ apparently occurred to Stroustrup during his Ph.D. at Cambridge University, as a sort of side product of work for his thesis: a type of compiler written in the Simula programming language. (Simula was a language developed in the mid-60s and based on ALGOL but with lots of extra features, designed for simulation; it is often seen as perhaps the first OO language.) (C++Ref#:B)

Now, this dude loved the features of Simula, but he thought it would be super cool if he could bring some of the structure of Simula into a faster, C-based programming language, as he also loved working down at the low level of microcode and machine code.

Example C++ Code

```cpp
// include headers; these are modules that include functions that you may use in your
// program
#include <iostream>
using namespace std;

int main()
{
    // print output to the user
    cout << "Hello World!" << endl;
    return 0;
}
```

Source: http://www.codebind.com/cpp-tutorial/cpp-hello-world-program/

```cpp
int CowPlaying = -1;

int GetActiveTowner(int t)
{
    int i;

    for (i = 0; i < numtowners; i++) {
        if (towner[i]._ttype == t)
            return i;
    }

    return -1;
}

void SetTownerGPtrs(BYTE *pData, BYTE **pAnim)
{
    int i;
    DWORD *pFrameTable;

    pFrameTable = (DWORD *)pData;

    for (i = 0; i < 8; i++) {
        pAnim[i] = CelGetFrameStart(pData, i);
    }
}
```

Source: https://github.com/diasurgical/devilutionX

Stroustrup realized that you needed a strong type system to make a language work well, but whilst he found the type systems of other languages frustrating, the ability to build your own types (or “classes”), as found in Simula, appealed to him.

He aimed for a language that would be reasonably understandable to most people working in the areas it reached, but without losing the appealing speed and efficiency of working with the raw mathematical fundamentals.

He wanted to be able to make his own types based on the problem he was solving (as in Simula) so he brought this kind of OO concept into C++. He wanted the ability to tap into the strengths of things like run-time polymorphism in solving tasks.

SOURCE: https://www.youtube.com/watch?v=uTxRF5ag27A

C++, because it’s rooted in C, is considered an efficient language for real-world, large-scale deployments.

It’s a language that’s great for working with low-level hardware efficiently whilst also offering great tools for abstraction. It also took OO concepts from Simula, such as the virtual functions Simula used for inheritance, which were replicated in C++ using a jump-table. The C++ version was simpler and faster, so the overhead of inheritance-based dispatch was lower, even when compiling through its original intermediate stage of optimized C code.
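The jump-table mentioned above is easier to appreciate when it is spelled out by hand. The sketch below models it in Python with plain lists and dictionaries. It is a simplified picture of how such tables are typically laid out, not a description of what any particular C++ compiler does, and every name in it (`SHAPE_VTABLE`, `call_virtual`, the slot constants) is hypothetical.

```python
# Slot numbers are fixed per hierarchy: every class uses the same index for the
# same virtual function, so a call site only needs to know the slot.
SLOT_AREA, SLOT_NAME = 0, 1

def shape_area(obj):
    return 0.0

def shape_name(obj):
    return "shape"

def square_area(obj):
    return obj["side"] ** 2

# One table per class, shared by all of its instances.
SHAPE_VTABLE = [shape_area, shape_name]

# A derived class starts from a copy of the base table and overwrites only the
# slots it overrides; here Square overrides area but inherits name.
SQUARE_VTABLE = list(SHAPE_VTABLE)
SQUARE_VTABLE[SLOT_AREA] = square_area

def new_shape():
    return {"vptr": SHAPE_VTABLE}

def new_square(side):
    return {"vptr": SQUARE_VTABLE, "side": side}

def call_virtual(obj, slot, *args):
    """A virtual call: follow the object's table pointer, index it, call the entry."""
    return obj["vptr"][slot](obj, *args)

for obj in (new_shape(), new_square(3.0)):
    print(call_virtual(obj, SLOT_NAME), call_virtual(obj, SLOT_AREA))
```

The cost of a call is the same however deep the class hierarchy is: one lookup in a table and one indirect call. Nothing has to search through parent classes at run time.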


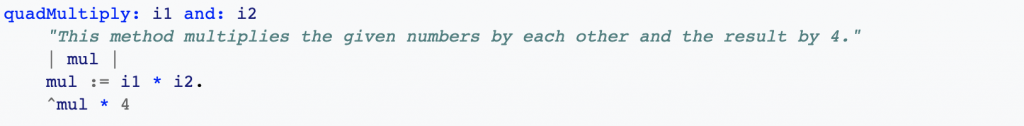

Smalltalk (1971-80)

This language is one of the root languages that led to modern object-oriented programming.

“Smalltalk is an object-oriented, dynamically typed reflective programming language”.

The language was principally designed and created in part for educational use, “more so for constructionist learning, at the Learning Research Group (LRG) of Xerox PARC by Alan Kay, Dan Ingalls, Adele Goldberg, Ted Kaehler, Scott Wallace, and others during the 1970s”.

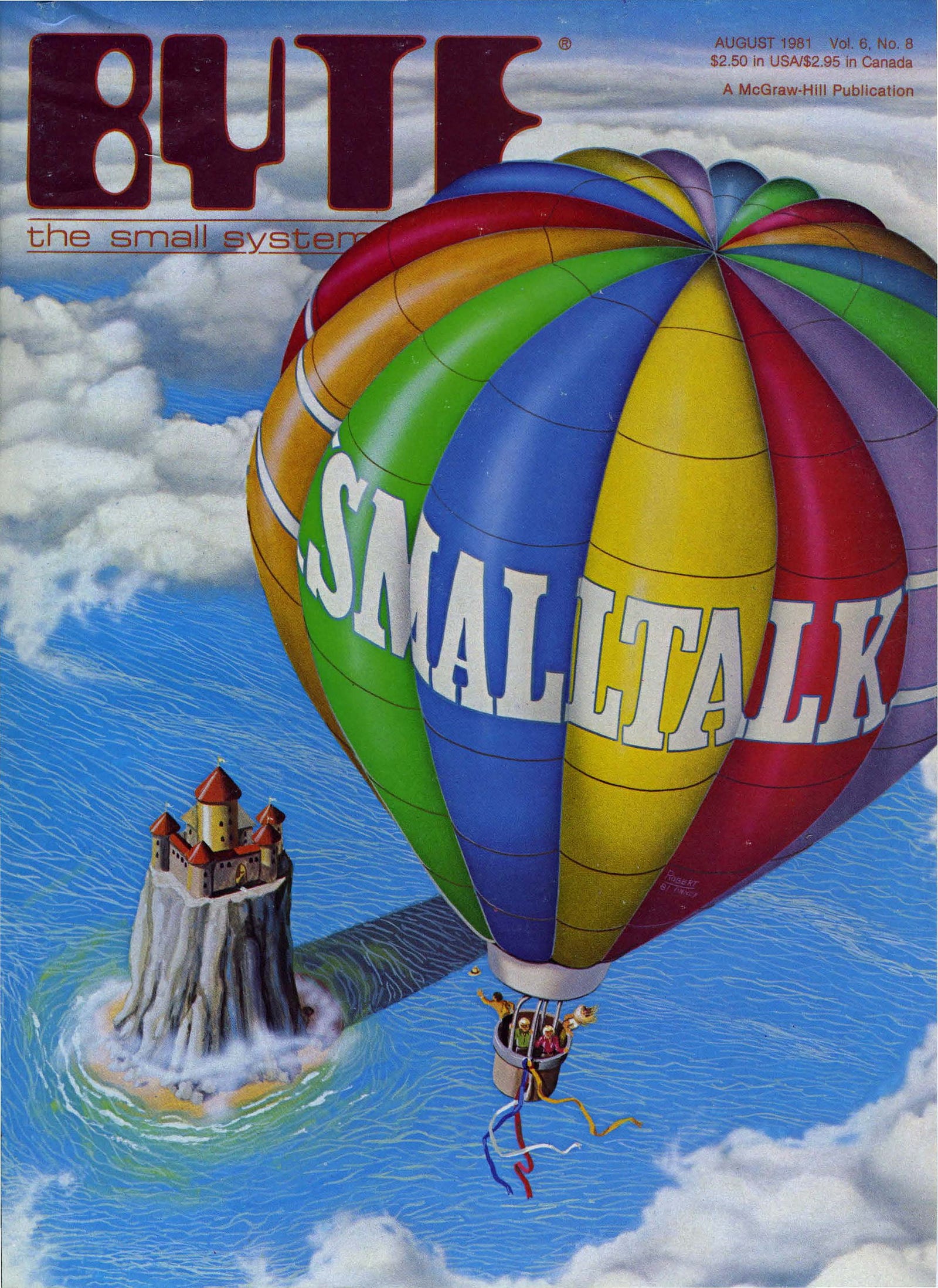

“Smalltalk” is often used to refer to the Smalltalk-80 programming language. Created in 1980, this was the first version to be made publicly available.

“Smalltalk was the product of research led by Alan Kay at Xerox Palo Alto Research Center (PARC); Alan Kay designed most of the early Smalltalk versions, Adele Goldberg wrote most of the documentation, and Dan Ingalls implemented most of the early versions” (Wikipedia).

“The first version, termed Smalltalk-71, was created by Kay in a few mornings on a bet that a programming language based on the idea of message passing inspired by Simula could be implemented in “a page of code”. A later variant used for research work is now termed Smalltalk-72 and influenced the development of the Actor model. Its syntax and execution model were very different from modern Smalltalk variants” (Wikipedia).

“Smalltalk-80 was the first language variant made available outside of PARC, first as Smalltalk-80 Version 1, given to a small number of firms (Hewlett-Packard, Apple Computer, Tektronix, and Digital Equipment Corporation (DEC)) and universities (UC Berkeley) for peer review and implementing on their platforms. Later (in 1983) a general availability implementation, named Smalltalk-80 Version 2, was released as an image (platform-independent file with object definitions) and a virtual machine specification. ANSI Smalltalk has been the standard language reference since 1998” (Wikipedia).

“…The sixties, particularly in the ARPA community, gave rise to a host of notions about “human-computer symbiosis” through interactive time-shared computers, graphics screens and pointing devices. Advanced computer languages were invented to simulate complex systems such as oil refineries and semi-intelligent behavior. The soon to follow paradigm shift of modern personal computing, overlapping window interfaces, and object-oriented design came from seeing the work of the sixties as something more than a “better old thing”. That is, more than a better way: to do mainframe computing; for end-users to invoke functionality; to make data structures more abstract. Instead the promise of exponential growth in computing/$/volume demanded that the sixties be regarded as “almost a new thing” and to find out what the actual “new things” might be. For example, one would compute with a handheld “Dynabook” in a way that would not be possible on a shared mainframe; millions of potential users meant that the user interface would have to become a learning environment along the lines of Montessori and Bruner; and needs for large scope, reduction in complexity, and end-user literacy would require that data and control structures be done away with in favor of a more biological scheme of protected universal cells interacting only through messages that could mimic any desired behavior. Early Smalltalk was the first complete realization of these new points of view as parented by its many predecessors in hardware, language and user interface design. It became the exemplar of the new computing, in part, because we were actually trying for a qualitative shift in belief structures—a new Kuhnian paradigm in the same spirit as the invention of the printing press—and thus took highly extreme positions which almost forced these new styles to be invented” (summarized abstract from Kay. A.C. (1993). The Early History of Smalltalk).

Smalltalk was one of many Object-Oriented programming languages based on Simula.

“””

A Smalltalk object can do exactly three things:

- Hold state (references to other objects).

- Receive a message from itself or another object.

- In the course of processing a message, send messages to itself or another object.

“””
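The three capabilities listed above can be sketched outside Smalltalk too. The following is only an illustrative Python approximation, not how Smalltalk itself is implemented: the `Counter` and `Logger` classes, the `receive` method and the message names are all hypothetical, made up for this sketch.

```python
class Logger:
    """Hypothetical collaborator that understands one message: 'log'."""

    def __init__(self):
        self.lines = []  # state: a reference to another object (a list)

    def receive(self, message, *args):
        if message == "log":
            self.lines.append(args[0])
            return None
        return f"doesNotUnderstand: {message}"


class Counter:
    """Hypothetical object that is driven purely by messages."""

    def __init__(self, logger):
        self._count = 0        # 1. hold state (references to other objects)
        self._logger = logger

    def receive(self, message, *args):
        # 2. receive a message and look up a handler for it at run time
        handler = getattr(self, "_on_" + message, None)
        if handler is None:
            return f"doesNotUnderstand: {message}"
        return handler(*args)

    def _on_increment(self):
        self._count += 1
        # 3. send a message to another object while processing one
        self._logger.receive("log", f"count is now {self._count}")
        return self._count

    def _on_add(self, n):
        for _ in range(n):
            self.receive("increment")  # 3. send a message to itself
        return self._count


logger = Logger()
counter = Counter(logger)
total = counter.receive("add", 3)   # total is 3
unknown = counter.receive("fly")    # "doesNotUnderstand: fly"
```

The point of the sketch is that the caller never reaches into the object; it only sends a named message and the receiver decides at run time what, if anything, to do with it.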

Smalltalk strongly influenced later programming languages, including Objective-C, Java, Python, and Ruby. It was in effect a by-product of the wider ARPA-funded research that this team carried out.

Code Example

References

A: https://medium.com/learn-how-to-program/chapter-2-introducing-smalltalk-b00cec93b25f

B: https://github.com/adambard/learnxinyminutes-docs/blob/master/smalltalk.html.markdown

Objective-C (1980s)

This programming language dates back to the early 1980s. It took C and added Smalltalk-style messaging elements. NeXT licensed it in 1988 for its NeXTSTEP platform, and it came to Apple when Apple acquired NeXT, the same deal that brought Steve Jobs back to Apple to lead it in a new direction.

Key People

The language was created by Brad Cox and Tom Love, initially at a company called Stepstone (originally Productivity Products International). NeXT Computer acquired the rights in 1995, and they subsequently passed to Apple, which went on to popularize the language, primarily by releasing development frameworks for its operating systems to a global network of developers.

How it Developed

Drs Cox and Love had learned Smalltalk while at ITT Corporation’s Programming Technology Center in 1981. “Tom Love was the Director of the Advanced Technology Group at the Programming Technology Center(PTC), and he hired Brad Cox into that group at ITT” (ref#: C). Dr. Cox seemingly arrived at the idea for what was to become Objective-C after reading the August 1981 issue of Byte Magazine, which was devoted to Smalltalk and included, for example, an article by Larry Tesler called “The Smalltalk Environment”.

Cox developed these ideas in a 1983 paper called “The Object-Oriented Precompiler: Programming Smalltalk-80 Methods in C Language”, and originally referred to the language as OOPC. A second generation of the language was then rebuilt from the ground up at Schlumberger Research, and a third version was rebuilt from scratch once more when Love and Cox worked at Productivity Products in June of 1983 (ref#: C).

“In 1988, NeXT licensed Objective-C from StepStone (the new name of PPI, the owner of the Objective-C trademark) and extended the GCC compiler to support Objective-C. NeXT developed the AppKit and Foundation Kit libraries on which the NeXTSTEP user interface and Interface Builder were based.”

Key Language Characteristics:

“The Objective-C model of object-oriented programming is based on message passing to object instances. In Objective-C one does not call a method; one sends a message. This is unlike the Simula-style programming model used by C++” (Wikipedia)

Example Code

    // A header file might look like this
    #import <Foundation/Foundation.h>

    @interface RWTScaryBugData : NSObject

    @property (strong) NSString *title;
    @property (assign) float rating;

    - (id)initWithTitle:(NSString*)title rating:(float)rating;

    @end

    // An implementation file may look like this
    #import "RWTScaryBugData.h"

    @implementation RWTScaryBugData

    @synthesize title = _title;
    @synthesize rating = _rating;

    - (id)initWithTitle:(NSString*)title rating:(float)rating {
        if ((self = [super init])) {
            self.title = title;
            self.rating = rating;
        }
        return self;
    }

    @end

    // SOURCE: https://www.raywenderlich.com

…

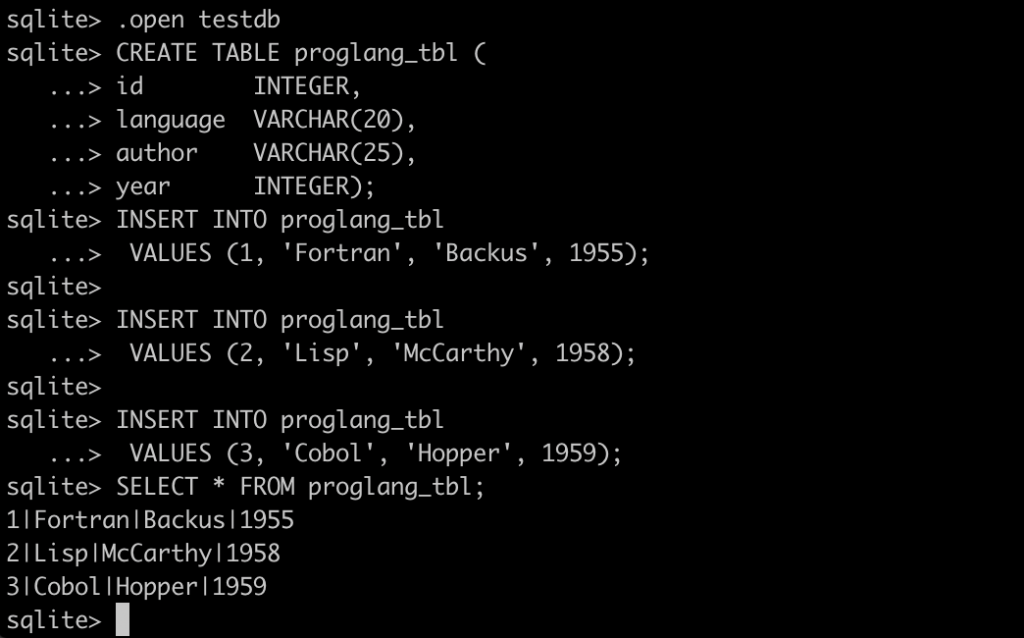

SQL “sequel” (70s-1986)

Ah, although its origins lie back in the 70s, SQL was first standardized by ANSI in 1986, the year that Kiss by Prince hit the charts. Or, not as much fun, the year the Soviet nuclear reactor at Chernobyl exploded (as explored in the so-named Netflix film). SQL has since become the de facto industry standard for relational systems.

Elsewhere in the world (namely at IBM, the home of business computing at the time) there was developed “a domain-specific language used in programming and designed for managing data held in a relational database management system (RDBMS), or for stream processing in a relational data stream management system (RDSMS). It is particularly useful in handling structured data where there are relations between different entities/variables of the data. SQL offers two main advantages over older read/write APIs like ISAM or VSAM: first, it introduced the concept of accessing many records with one single command; and second, it eliminates the need to specify how to reach a record, e.g. with or without an index” (Wikipedia).

It’s considered a 4GL, or fourth-generation language: it is declarative and reads close to English, so you state what data you want rather than how to fetch it.

SQL data retrieval

SQL has four core commands for data manipulation:

SELECT: for retrieving data

INSERT: for creating data

UPDATE: for altering data

DELETE: for removing data

For example, SELECT typically has the format:

SELECT columns (or ‘*’)

FROM relation(s)

[WHERE constraint(s)] ;

Which, in a simple example, could look like:

SELECT *

FROM CUSTOMER

WHERE NAME = 'P Abdul';

Creating Tables

Creating tables can look like:

    CREATE TABLE CUSTOMER (
        REFNO NUMBER PRIMARY KEY NOT NULL,
        NAME CHAR (20),
        ADDRESS CHAR (30),
        STATUS CHAR (8));
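To try the CREATE TABLE statement and the four data manipulation commands end to end, here is a minimal sketch using Python's built-in `sqlite3` module with an in-memory database. It makes some assumptions that are not in the text above: SQLite stands in for whichever RDBMS you actually use, `INTEGER` replaces `NUMBER` for the key column, and the sample rows (addresses, the second customer, the `GOLD` status) are invented for illustration.

```python
import sqlite3

# An in-memory database, so nothing is written to disk.
conn = sqlite3.connect(":memory:")

# CREATE TABLE, adapted from the CUSTOMER example (INTEGER instead of NUMBER).
conn.execute(
    """
    CREATE TABLE CUSTOMER (
        REFNO   INTEGER PRIMARY KEY NOT NULL,
        NAME    CHAR(20),
        ADDRESS CHAR(30),
        STATUS  CHAR(8)
    )
    """
)

# INSERT: create data. The '?' placeholders keep values out of the SQL text.
conn.executemany(
    "INSERT INTO CUSTOMER (REFNO, NAME, ADDRESS, STATUS) VALUES (?, ?, ?, ?)",
    [
        (1, "P Abdul", "1 High Street", "NEW"),   # invented sample data
        (2, "J Smith", "2 Low Road", "NEW"),      # invented sample data
    ],
)

# UPDATE: alter data.
conn.execute("UPDATE CUSTOMER SET STATUS = ? WHERE NAME = ?", ("GOLD", "P Abdul"))

# DELETE: remove data.
conn.execute("DELETE FROM CUSTOMER WHERE REFNO = ?", (2,))

# SELECT: retrieve data.
rows = conn.execute(
    "SELECT * FROM CUSTOMER WHERE NAME = ?", ("P Abdul",)
).fetchall()
remaining = conn.execute("SELECT COUNT(*) FROM CUSTOMER").fetchone()[0]

print(rows)       # [(1, 'P Abdul', '1 High Street', 'GOLD')]
print(remaining)  # 1
```

Note that each statement says what should happen to the data, never how to locate the records; that part is left to the database engine.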

Key People

SQL was initially developed at IBM by Donald D. Chamberlin and Raymond F. Boyce after learning about the relational model from Ted Codd in the early 1970s. This version, initially called SEQUEL (Structured English Query Language), was designed to manipulate and retrieve data stored in IBM’s original quasi-relational database management system, System R, which a group at IBM San Jose Research Laboratory had developed during the 1970s.

Donald D. Chamberlin

“Donald Chamberlin was born in 1944 in San Jose, California, and holds a B.S. in engineering from Harvey Mudd College (1966) and an M.S. (1967) and Ph.D. (1971) in electrical engineering from Stanford University. Chamberlin is best known as co-inventor of SQL (Structured Query Language), the world’s most widely used database language. Developed in the mid-1970s by Chamberlin and Raymond Boyce, SQL was the first commercially successful language for relational databases. Chamberlin was also one of the managers of IBM’s “System R” project, which produced the first SQL implementation and developed much of IBM’s relational database technology.

Chamberlin joined IBM Research at the T.J. Watson Research Center, Yorktown Heights, New York, in 1971. In 1973, he returned to San Jose, California, and continued his work at IBM’s Almaden Research Center, where he was named an IBM Fellow in 2003. In 2009, he was appointed a Regents’ Professor at UC Santa Cruz.

Chamberlin was named an ACM Fellow in 1994 and an IEEE Fellow in 2007. In 1997, he received the ACM SIGMOD Innovations Award and was elected to the National Academy of Engineering. In 2005, he was given an honorary doctorate by the University of Zurich.” (Ref#: https://www.ithistory.org/honor-roll/dr-raymond-ray-f-boyce).

Raymond F. Boyce

“In the early 1970’s, together with Donald D. Chamberlin he co-developed Structured Query Language (SQL) while managing the Relation Database development group for IBM in San Jose, California. Initially called SEQUEL (Structured English Query Language) and based on their original language called SQUARE (Specifying Queries As Relational Expressions). SEQUEL was designed to manipulate and retrieve data in relational databases. By 1974, he and Chamberlin published “SEQUEL: A Structured English Query Language” which detailed their refinements to SQUARE and introduced us to the data retrieval aspects of SEQUEL. It was one of the first languages to use Edgar F. Codd’s relational model. SEQUEL was later renamed to SQL by dropping the vowels, because SEQUEL was a trademark registered by the Hawker Siddeley aircraft company. Today, SQL has been generally established as the standard relational databases language. In 1974, he and Edgar F. Codd, co-developed the Boyce–Codd normal form (or BCNF). It is a type of normal form that is used in database normalization. The goal of relational database design is to generate a set of database schemas that store information without unnecessary redundancy. Boyce-Codd accomplishes this and allows users to retrieve information easily. Using BCNF, databases will have all redundancy removed based on functional dependencies. It is a slightly stronger version of the third normal form. He died in 1974 as a result of an aneurysm of the brain, leaving behind his wife of almost five years, Sandy, and his daughter Kristin, who was just ten months old.”

EXAMPLE SQL STATEMENTS


History of SQL

- 1970 – E.F. Codd develops the relational database concept

- 1974-1979 – System R with Sequel (later called SQL) is created at the IBM Research Lab

- 1979 – Oracle markets the first relational DB with SQL

- 1981 – SQL/DS, the first RDBMS available on DOS/VSE

- Others followed: INGRES (1981), IDM (1982), DG/SQL (1984), Sybase (1986)

- 1986 – The ANSI SQL standard was released

- 1989, 1992, 1999, 2003, 2006, 2008 – Major ANSI standard updates

- Present Day – SQL is supported by most major database vendors

Basic Data Types in SQL

| Character types | char, varchar |

| Integer values | integer, smallint |

| Decimal numbers | numeric, decimal |

| Date data type | date |

JOINs

“It is not usually very long before a requirement arises to combine information from more than one table, into one coherent query result”.

There are various kinds of joins; we won’t go into them all in this article, but will provide more details elsewhere.
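As a small taste, the sketch below contrasts two common kinds, an inner join and a left join, again using Python's built-in `sqlite3` module. The `ORDERS` table, its columns and all the sample rows are assumptions made up for this illustration; only the `CUSTOMER` name echoes the earlier example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Two related tables: each order points at a customer through REFNO.
conn.executescript(
    """
    CREATE TABLE CUSTOMER (REFNO INTEGER PRIMARY KEY, NAME TEXT);
    CREATE TABLE ORDERS (ORDERNO INTEGER PRIMARY KEY, REFNO INTEGER, ITEM TEXT);

    INSERT INTO CUSTOMER VALUES (1, 'P Abdul'), (2, 'J Smith');
    INSERT INTO ORDERS VALUES (10, 1, 'Keyboard'), (11, 1, 'Mouse');
    """
)

# INNER JOIN: only customers that have at least one matching order.
inner = conn.execute(
    """
    SELECT C.NAME, O.ITEM
    FROM CUSTOMER C
    INNER JOIN ORDERS O ON O.REFNO = C.REFNO
    ORDER BY O.ORDERNO
    """
).fetchall()

# LEFT JOIN: every customer, with NULL (None in Python) where no order matches.
left = conn.execute(
    """
    SELECT C.NAME, O.ITEM
    FROM CUSTOMER C
    LEFT JOIN ORDERS O ON O.REFNO = C.REFNO
    ORDER BY C.REFNO, O.ORDERNO
    """
).fetchall()

print(inner)  # [('P Abdul', 'Keyboard'), ('P Abdul', 'Mouse')]
print(left)   # [('P Abdul', 'Keyboard'), ('P Abdul', 'Mouse'), ('J Smith', None)]
```

The only difference between the two queries is the join keyword, yet the left join keeps the customer who has never ordered anything.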

USES

SQL is best used for running and interacting with your data layer. Whilst it is possible to put business logic into our SQL databases (and sometimes database administrators, or DBAs, will push for this due to their bias toward it), this practice is best avoided, as it is typically best to separate the application layer from the database layer. This does not mean, however, that we should not make careful use of things like stored procedures (“stored procs”) in, for example, our Microsoft SQL Server databases or similar, as this often confers good performance benefits.

Different Flavours of SQL

“Although SQL is an ANSI (American National Standards Institute) standard, there are many different versions of the SQL language.

However, to be compliant with the ANSI standard, they all support at least the major commands (such as SELECT, UPDATE, DELETE, INSERT, WHERE) in a similar manner.

Note: Most of the SQL database programs also have their own proprietary extensions in addition to the SQL standard!”(http://w3schools.sinsixx.com/sql/sql_intro.asp.htm).

https://dl.acm.org/doi/abs/10.1145/142003.142005

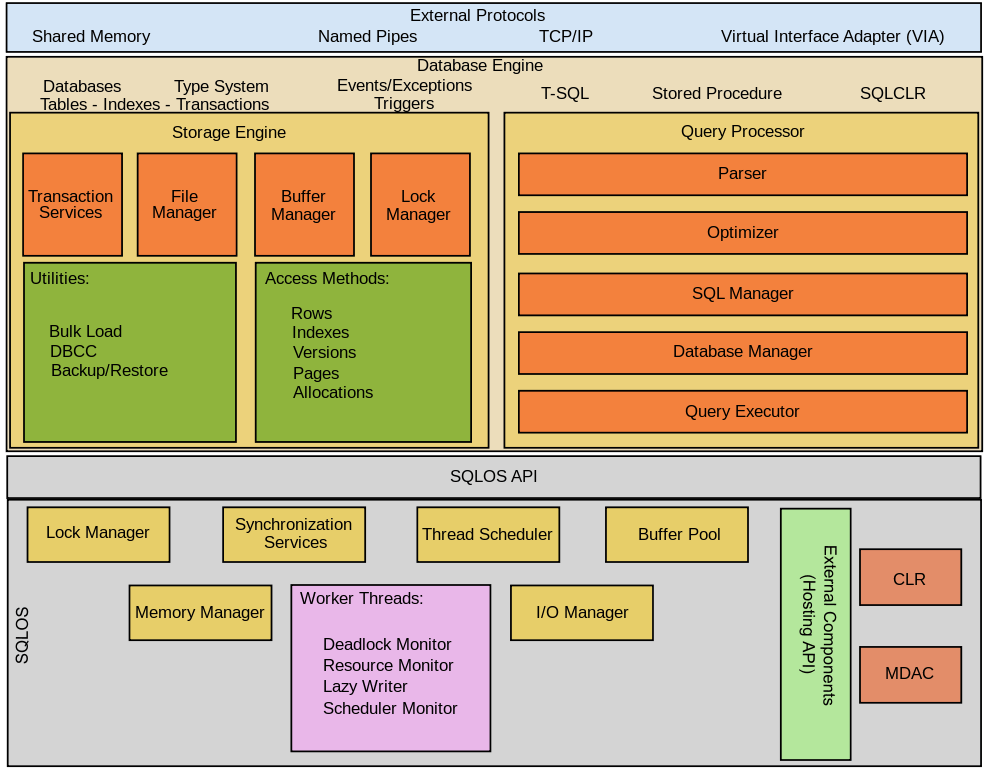

Microsoft SQL Server

SQL Server is a relational database management system (RDBMS) developed by Microsoft. Built on top of SQL, it is also tied to Transact-SQL (T-SQL), Microsoft’s own variant of SQL that adds a set of proprietary programming constructs. Its main purpose is as a database server, that is to say, for storing and retrieving data requested by other applications, either locally or over a network, including the internet.

Microsoft has (in the past) tried to tie SQL Server to the Windows environment, in a similar way to their attempt to create what was essentially their own proprietary version of Java in the form of C#, which was also geared towards tying developers to their operating systems. However, in 2016 Microsoft announced SQL Server for Linux, and with SQL Server 2017 it became generally available to run on both Windows and Linux.

“””

SQL Server supports different data types, including primitive types such as Integer, Float, Decimal, Char (including character strings), Varchar (variable length character strings), binary (for unstructured blobs of data), Text (for textual data) among others. The rounding of floats to integers uses either Symmetric Arithmetic Rounding or Symmetric Round Down (fix) depending on arguments: SELECT Round(2.5, 0) gives 3.

Microsoft SQL Server also allows user-defined composite types (UDTs) to be defined and used. It also makes server statistics available as virtual tables and views (called Dynamic Management Views or DMVs). In addition to tables, a database can also contain other objects including views, stored procedures, indexes and constraints, along with a transaction log. A SQL Server database can contain a maximum of 2^31 objects, and can span multiple OS-level files with a maximum file size of 2^60 bytes (1 exabyte). The data in the database are stored in primary data files with an extension .mdf. Secondary data files, identified with a .ndf extension, are used to allow the data of a single database to be spread across more than one file, and optionally across more than one file system. Log files are identified with the .ldf extension.

“””
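The two rounding behaviours mentioned in the quote can be illustrated with Python's `decimal` module. This is only a sketch of the arithmetic, not SQL Server's own implementation: the helper name `round_sql_style` and its `truncate` flag are hypothetical, loosely mirroring the optional third argument of T-SQL's `ROUND`, which switches from rounding to truncation.

```python
from decimal import Decimal, ROUND_DOWN, ROUND_HALF_UP


def round_sql_style(value, places=0, truncate=False):
    """Round a decimal string the way the quote describes (hypothetical helper).

    truncate=False: symmetric arithmetic rounding (ties go away from zero).
    truncate=True:  symmetric round down (digits are simply cut off).
    """
    quantum = Decimal(1).scaleb(-places)  # 1 for places=0, 0.01 for places=2
    mode = ROUND_DOWN if truncate else ROUND_HALF_UP
    return Decimal(value).quantize(quantum, rounding=mode)


print(round_sql_style("2.5"))                 # 3
print(round_sql_style("-2.5"))                # -3
print(round_sql_style("2.5", truncate=True))  # 2
print(round_sql_style("2.675", 2))            # 2.68

# Python's built-in round() uses round-half-to-even instead, so it differs:
print(round(2.5))                             # 2
```

Values are passed as strings so that `Decimal` sees exactly the digits written, rather than a binary floating-point approximation of them.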

SQL Server Data Types

Data types in SQL Server are organized into the following categories:

| Exact numerics | Unicode character strings |

| Approximate numerics | Binary strings |

| Date and time | Other data types |

| Character strings |

SOURCE: https://docs.microsoft.com/en-us/sql/t-sql/data-types/data-types-transact-sql?view=sql-server-ver15

T-SQL